The Most Confidently Wrong AI Report I've Ever Read

The 2028 Global Intelligence Crisis report crashed all stocks mentioned. Here's what Wall Street missed, and what a tech person caught.

Two analysts publish “The 2028 Global Intelligence Crisis” on Substack.

A fictional macro memo written in June 2028. AI displaces white-collar workers, consumer spending collapses, and financial markets crack.

By Monday morning, the Dow drops 800 points. And the companies named directly in the report take the hardest hits: DoorDash drops more than 7%; IBM falls 12%. (Check out this interactive page to see how these stocks were affected at any given point in Feb 2026.)

The same doomsday prophecy that hurts some benefits others.

For example, Block announces it will cut 40% of its workforce — citing AI efficiency — and its stock surges 18%, illustrating the exact paradox the report had described.

It bothers me.

Not just the doomsday framing, nor the fact that it’s fiction dressed up as analysis.

What bothers me is that the smart people pushing back on it, the economists, the hedge fund analysts, the Bloomberg anchors, all focused on financial and economic terms.

Nobody was asking:

Do these two actually understand how the technology works?

I went through this 7,000-word report line by line.

If you have passed 12th-grade math and are a frequent user of the internet, you’ll immediately recognize every single flaw I’m about to point out. I found 15. At the end, I’m going to ask you how many you spotted.

So pay attention, and let’s get into it.

Part 1: The Numbers Don’t Survive a Google Search

Flaw #1: 400,000 Tokens Per Day Per American

The Citrini report assumes we will all use at least 400,000 tokens per day.

Commerce stopped being a series of discrete human decisions and became a continuous optimization process, running 24/7 on behalf of every connected consumer. By March 2027, the median individual in the United States was consuming 400,000 tokens per day - 10x since the end of 2026.

Let’s make that concrete.

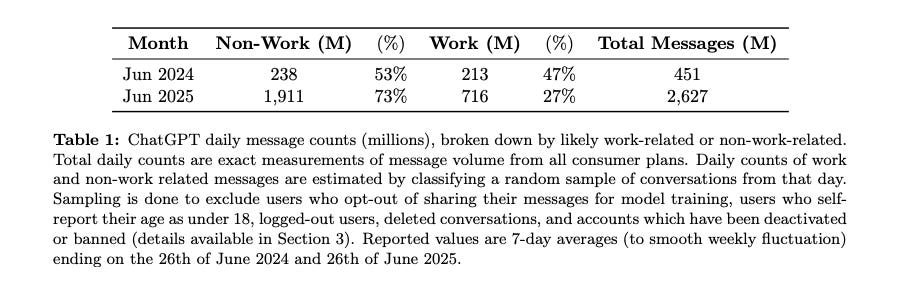

Here’s a report by OpenAI on how people use ChatGPT.

Roughly 3.7 messages per day per user. At ~500 tokens per exchange, that’s ~1,850 tokens per day for the median user — less than 0.5% of the Citrini number.
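If you want to check that arithmetic yourself, here is a minimal sketch. The 3.7 messages and the 400,000 tokens are the figures quoted above; the 500 tokens per exchange is only a rough estimate, so treat the result as a ballpark.

```python
# Figures quoted in the article. TOKENS_PER_EXCHANGE is a rough estimate,
# not a measured value.
MESSAGES_PER_DAY = 3.7
TOKENS_PER_EXCHANGE = 500
CITRINI_TOKENS_PER_DAY = 400_000


def daily_tokens(messages_per_day: float, tokens_per_exchange: float) -> float:
    """Estimated tokens a user consumes per day."""
    return messages_per_day * tokens_per_exchange


median_tokens = daily_tokens(MESSAGES_PER_DAY, TOKENS_PER_EXCHANGE)
share_of_citrini = median_tokens / CITRINI_TOKENS_PER_DAY

print(f"Median user: about {median_tokens:,.0f} tokens/day")
print(f"Share of the Citrini figure: {share_of_citrini:.2%}")
```

Even if you double or triple the tokens-per-exchange assumption, the median user stays far below the report's number.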

The only person I found who came close?

A Reddit user spent six weeks talking to ChatGPT nonstop while driving for work. Voice-to-text, all day, every day. He hit roughly 200,000 tokens a day. Even pestering ChatGPT non-stop, he still reached only half the Citrini number.

So, the scenario the Citrini report describes requires you to use AI in your sleep.
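To see why, here is a rough sketch of what 400,000 tokens a day would take at chat-sized exchanges. It reuses the ~500 tokens per exchange estimate from earlier, which is an assumption; background agents could burn tokens in bigger chunks.

```python
CITRINI_TOKENS_PER_DAY = 400_000
TOKENS_PER_EXCHANGE = 500  # assumption: same rough estimate used in the article
SECONDS_PER_DAY = 24 * 60 * 60

# How many chat-sized exchanges add up to the Citrini figure?
exchanges_needed = CITRINI_TOKENS_PER_DAY / TOKENS_PER_EXCHANGE

# Spread evenly over a full 24 hours, how often would one have to happen?
seconds_between_exchanges = SECONDS_PER_DAY / exchanges_needed

print(f"Exchanges needed per day: {exchanges_needed:.0f}")
print(f"One exchange every {seconds_between_exchanges:.0f} seconds, around the clock")
```

Under that assumption it works out to 800 exchanges a day, or one every 108 seconds, including the hours you are asleep.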

Flaw #2: My Friend Said…

Now, I want to take this one seriously for a moment, because the Citrini report leans heavily on it.

A friend of ours was a senior product manager at Salesforce in 2025. Title, health insurance, 401k, $180,000 a year. She lost her job in the third round of layoffs. After six months of searching, she started driving for Uber. Her earnings dropped to $45,000.

Their friend was a senior PM at Salesforce, earning $180k. Laid off. Now driving Uber for $45k.

If that’s real, I have genuine sympathy.

So my issue isn’t with the person, but with how these two analysts are using one person’s career trajectory as evidence of an economy-wide structural collapse.

But let’s actually engage with Salesforce specifically, since they chose that example.

Yes, Salesforce cut 4,000 customer support roles in 2025, explicitly citing AI agents.

However, the report leaves out that Salesforce then pulled back from large language models entirely soon after, because only 6% of their customers adopted and paid for Salesforce’s AI. Even their own engineering team admitted their AI is too unpredictable.

Not to mention that, in the meantime, the broader PM job market was up 12% year-over-year as of January 2026. Open PM roles on LinkedIn doubled in 12 months.

So the story they’ve told is: one person at one company during a specific restructuring cycle ends up driving Uber, therefore the entire white-collar economy collapses.

In statistics, we call this n=1.
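Here is a small illustration of why a single data point tells you nothing about spread. Python's standard `statistics.stdev` refuses to compute a sample standard deviation from one value. The `sample` list is just the one income figure from the anecdote, used for illustration.

```python
import statistics

# One friend's post-layoff income, taken from the report's anecdote.
sample = [45_000]

try:
    spread = statistics.stdev(sample)
except statistics.StatisticsError as err:
    # A sample standard deviation needs at least two data points.
    spread = None
    print(f"Cannot estimate spread from n={len(sample)}: {err}")
```

No spread means no confidence interval, and no basis for a claim about the whole white-collar economy.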

Remember, this report moved the Dow 800 points. Based on math like this.

Now imagine what it looks like when they start talking about the system underneath.

Part 2: They Don’t Know A Thing About Enterprise Software

Flaw #3: Build Your Own SaaS in Weeks

They claim:

In late 2025, agentic coding tools took a step function jump in capability.

A competent developer working with Claude Code or Codex could now replicate the core functionality of a mid-market SaaS product in weeks…

So let’s look at AI code maturity in early 2026. Not in demos but in production.

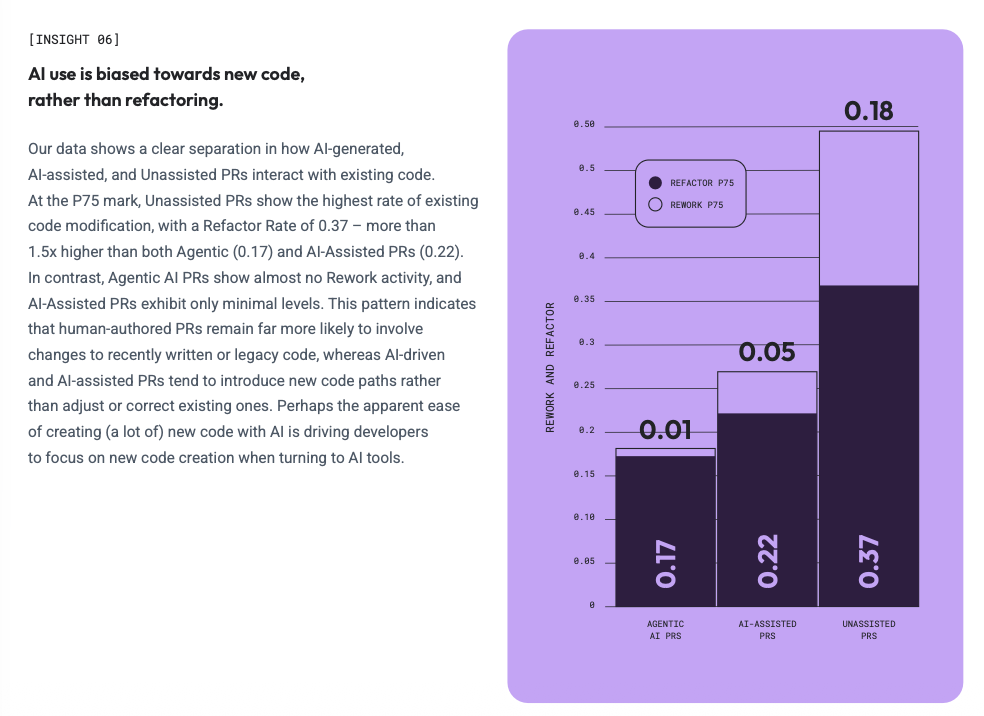

LinearB (a company that focuses on developer experience) tracked 8.1 million pull requests across nearly 5,000 engineering teams. Here’s what they found.

When human engineers write code, 84.4% of it gets merged. When AI writes code (all other conditions being the same), only slightly more than 30% gets merged. LinearB attributes the gap to AI not understanding the system’s structure.

Two-thirds of it doesn’t make it past code review. But sure, let’s build a SaaS product and release it to your clients.
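For a sense of scale, here is a minimal sketch comparing the two merge rates. The 84.4% is the figure quoted above; the 0.30 is an assumption standing in for "slightly more than 30%", so the outputs are approximate.

```python
HUMAN_MERGE_RATE = 0.844  # figure quoted in the article
AI_MERGE_RATE = 0.30      # assumption: stands in for "slightly more than 30%"

# Share of AI-written pull requests that never get merged.
ai_rejected_share = 1 - AI_MERGE_RATE

# How many times more likely a human-written pull request is to be merged.
human_vs_ai_ratio = HUMAN_MERGE_RATE / AI_MERGE_RATE

print(f"AI pull requests not merged: about {ai_rejected_share:.0%}")
print(f"Human code is merged roughly {human_vs_ai_ratio:.1f}x as often")
```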

Flaw #4: All CIOs Buy the AI Hype

And even if AI could write the code, the Citrini report then assumes CIOs would rush to it:

… that the CIO reviewing a $500k annual renewal started asking the question “what if we just built this ourselves?”

These authors have probably never bought any enterprise software before, but it’s okay.

Let’s make it clear to them.

Real enterprise software work is mostly maintaining, integrating, and enhancing the workflows of existing systems.

Exactly what AI avoids doing or can’t do.

It’s like a builder who can only add new rooms. Ask him to fix a cracked wall, and he’ll just build another wall in front of it.

Any CEO reading this, you should be really worried if your CIO’s first reaction is to replace all SaaS licenses with AI.

Flaw #5: SaaS Is Nothing But The User Layer

But fine, these two are likely not the only analysts who don’t get AI and the job of a CIO. Obviously, they also don’t get software in general.

The procurement manager told him he’d been in conversations with OpenAI about having their “forward deployed engineers” use AI tools to replace the vendor entirely. They renewed at a 30% discount…That was a good outcome, he said. The “long-tail of SaaS”, like Monday.com, Zapier and Asana, had it much worse.

Investors were prepared - expectant, even - that the long tail would be hit hard. They may have made up a third of spending for the typical enterprise stack, but they were obviously exposed. The systems of record, however, were supposed to be safe from disruption.

According to them, investors are piling their cash into bets against SaaS companies. If you hold any software stocks, the worst thing you can do is listen to these two.

Yes, as a user, all you see is the top layer: the boards, the timelines, the dashboards. And yes, AI can build that. But that layer is as thin as the peel on an apple.

What you actually rely on is everything else underneath.

Every project, every task, every decision your company has ever logged. Don’t even get me started on the SOC 2 certification, GDPR compliance, and audit trails your legal team depends on.

Those integrations didn’t take a weekend. They’re woven into your org’s permissions, your approval flows, your automations — built over years by your tech team, trained on for months by your ops team.

Flaw #6: Likely Never Heard of “Legacy System”

And it goes deeper than either of them realizes.

We’re talking about technology so old, it could be their dad.

The best example is COBOL. Its code dates back to the 1960s. You’ve likely never heard of it, but you still use services that are built on top of it.

Every time you withdraw cash from an ATM, that transaction is almost certainly running on COBOL. 95% of all ATM transactions, 80% of in-person credit card swipes: all COBOL. Pull it out of the financial system tomorrow, and you can’t take money out or pay for your groceries.

Beyond ATMs, 90% of Fortune 500 business systems still run on it.

Banks have been trying to migrate off COBOL for 30 years. Simply put, they can’t. The cost is too high. TSB Bank in the UK estimated that it’d cost them £300+ million to migrate.

The people who wrote the original code are retired or dead. The documentation doesn’t exist. And every time someone tries to modernise it, they find another tentacle they didn’t know about.

Now here’s where AI makes it worse, not better.

Because so little COBOL code is publicly available on the internet to learn from, AI’s success rate with it is much worse than with modern languages.

So far, I’ve found that these two don’t get AI and don’t get legacy systems. Fine, different industry.

But what’s worse is that they are blind to how the economy functions.

Part 3: They See the Apple Peel, and Think That’s the Apple.

The next 3 flaws all share the same blind spot, so I’ll start with framing.

Every industry that’s supposedly been disrupted runs on invisible infrastructure you’ve never seen. Infrastructure built over decades, costing billions of dollars.

The app, the website, the interface, is maybe 5% of what’s actually there.

An AI agent you send out to complete a task relies on every piece of infrastructure already in place. It’s like having someone stand at the checkout counter for you: the AI can be as smart as it wants, but no shopping happens without the items on the shelves and the supply chain behind them.

What the Citrini report does, over and over, is look at that checkout counter and conclude the whole supermarket is replaceable.

Let me show you.

Flaw #7: Vibe-Coded Alternatives to Replace DoorDash

Agents accelerated both sides of the destruction. They enabled the competitors and then they used them. The DoorDash moat was literally “you’re hungry, you’re lazy, this is the app on your home screen.” An agent doesn’t have a home screen. It checks DoorDash, Uber Eats, the restaurant’s own site, and twenty new vibe-coded alternatives so it can pick the lowest fee and fastest delivery every time.

The report describes the frontend of a food delivery app (DoorDash) — once again — and concludes the whole thing is replaceable.

I used to run a large portion of the global operational technology for Just Eat Takeaway, so let me explain.

To make the app work, DoorDash had to physically go restaurant by restaurant, city by city, and convince thousands of independent businesses to join. Not to mention how much time they invested in helping mom-and-pop shops configure their delivery radius, photograph their menus, and train their staff to use the tablet.

That supply network took more than a decade and billions of dollars to build.

And now you tell me a vibe-coded startup can replicate that in weeks? Yes, you can build the app in weeks, but it’ll have no shops and no food.

Flaw #8: A Totally Rational, All-Knowing System?

And it’s not just that food delivery is doomed, apparently. The report says:

Travel booking platforms were an early casualty, because they were the simplest. By Q4 2026, our agents could assemble a complete itinerary (flights, hotels, ground transport, loyalty optimization, budget constraints, refunds) faster and cheaper than any platform.

They didn’t get the memo that we’ve all had for three years now: AI agents hallucinate. For instance, an agent will tell you the booking is confirmed when, in reality, it got stuck and the booking was never completed.

But let’s set that aside.

What do you think sits underneath every travel booking tool — Skyscanner and Google Flights?

It’s not AI scraping one airline website after another in real time.

That would be astronomically expensive, and most websites would block it within minutes.

Without Skyscanner, your AI is useless.

On top of that, “the worst-case scenario” mentioned in the report is actually the best-case scenario for these platforms, since they no longer need to pay for expensive user research and UX design.

SaaS businesses’ AI-era job is to make sure the machine can find the right information efficiently.

Flaw #9: Let AI Handle Transactions

Agentic commerce routing around interchange posed a far greater risk to card-focused banks and mono-line issuers, who collected the majority of that 2-3% fee and had built entire business segments around rewards programs funded by the merchant subsidy.

The report assumes AI agents will route payments through crypto, bypassing credit card fees. Crypto payments have existed for over a decade. Merchants, regulators, and consumers have consistently rejected them for everyday transactions.

For this to work, you’d need mass crypto wallet adoption, regulatory approval of stablecoins at scale, merchant acceptance, and fraud infrastructure rebuilt from scratch — all within their laughable 12-month timeline.

Banks exist to protect you when things go wrong. The report doesn’t seem to understand that.

They also talk about how white-collar workers would all become delivery drivers, opening the sentence with “This was oddly poetic”…

On this, I agree.

It is oddly poetic how supposedly professional analysts didn’t even do their homework.

Part 4: Even If the Tech Worked, the Real World Won’t Allow It

Flaw #10: On-Device Handles All Your Decisions

The 2028 Crisis paints a picture of distilled models running on your phone that will quietly handle your shopping in the background.

Now ask yourself, what have the device companies been doing?

Apple spent three years and billions of dollars building on-device AI for one explicit reason: to make sure your data never leaves your device. Same as Google.

Apple’s Private Cloud Compute architecture is built around a single guarantee: your data must never be available to anyone, at any time, unless you consent to it.

Not to mention that the on-device AI model on your iPhone is typically much smaller, and therefore less capable, than the models running in the cloud.

It isn’t altruism from Google or Apple, of course. It’s GDPR. It’s CCPA. It’s a decade of regulatory pressure forcing every platform owner to build privacy into the architecture.

So while the analysts dream of a world where all merchants get to access your data via AI, two of the most powerful technology companies on earth already decided this system shouldn’t exist.

Flaw #11: Mistaking Tedium for Value

And even beyond the devices, they also misunderstand how the finance industry works:

Financial advice. Tax prep. Routine legal work. Any category where the service provider’s value proposition was ultimately “I will navigate complexity that you find tedious” was disrupted, as the agents found nothing tedious.

This sounds clever. But it’s wrong about what these professions actually sell.

Let me use tax as the example, because it’s the clearest.

When you hire a CPA to file your taxes, you’re not just paying them to find the right boxes to fill in. You could Google that. What you’re actually paying for is this: if the IRS comes knocking, there is a licensed human being who signed their name to your return and is legally responsible for what’s on it.

I doubt that the IRS will accept “the AI told me to” as a defence.

In fact, the IRS’s own internal watchdog has explicitly warned that AI tools can misstate your eligibility for credits and deductions, and that you, the taxpayer, remain fully liable for every number on your return.

AI isn’t going to jail for you.

The two authors mistake the tedium of these jobs for their value. The tedium is just the surface. Underneath it is a licensed, regulated, legally accountable human whose name is on the line.

So the tech doesn’t work how they described, and the real world wouldn’t allow what they’re predicting. But maybe the data support their thesis?

Part 5: The Data Says the Exact Opposite

Unfortunately, the data doesn’t side with them either.

Flaw #12 and #13: White-Collar Workers and Indian Outsourcing Firms Lose Their Jobs

The US economy is a white-collar services economy. White-collar workers represented 50% of employment and drove roughly 75% of discretionary consumer spending. The businesses and jobs that AI was chewing up were not tangential to the US economy, they were the US economy.

Let’s look at what the data actually says. Right now. In the real world.

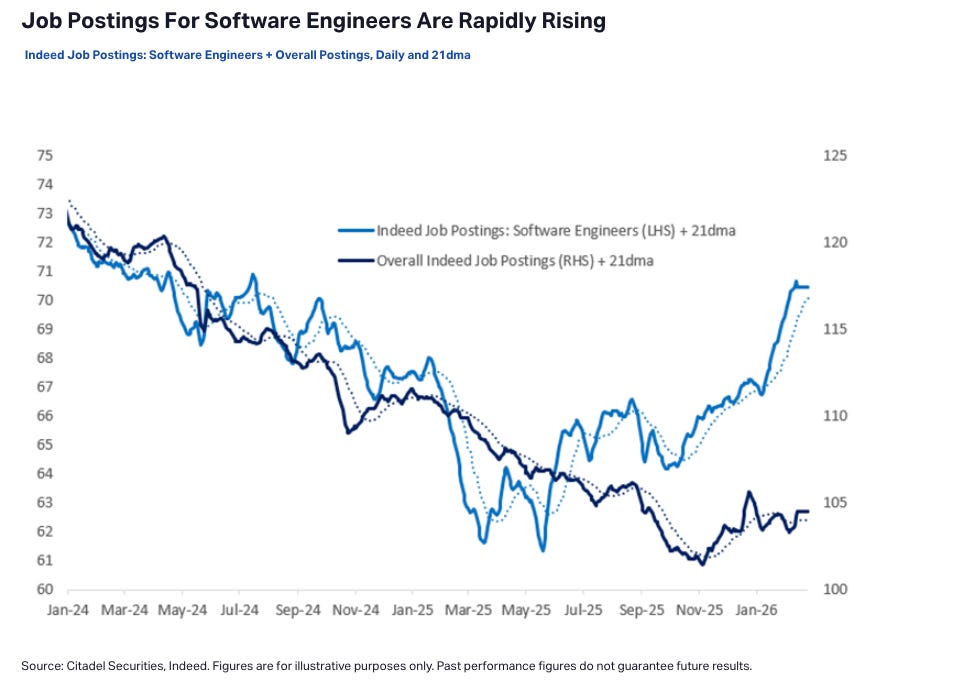

This chart is from Citadel Securities, based on Indeed’s live job posting data. Software engineer postings are up 11% year-over-year, and sharply accelerating into early 2026.

If AI were already replacing software engineers at the pace the report describes, this chart would look very different.

But let’s not just rely on one market. Let’s go to the country the Citrini report says would be hit hardest, India.

India was the inverse. The country’s IT services sector exported over $200 billion annually, the single largest contributor to India’s current account surplus and the offset that financed its persistent goods trade deficit. The entire model was built on one value proposition: Indian developers cost a fraction of their American counterparts. But the marginal cost of an AI coding agent had collapsed to, essentially, the cost of electricity. TCS, Infosys and Wipro saw contract cancellations accelerate through 2027.

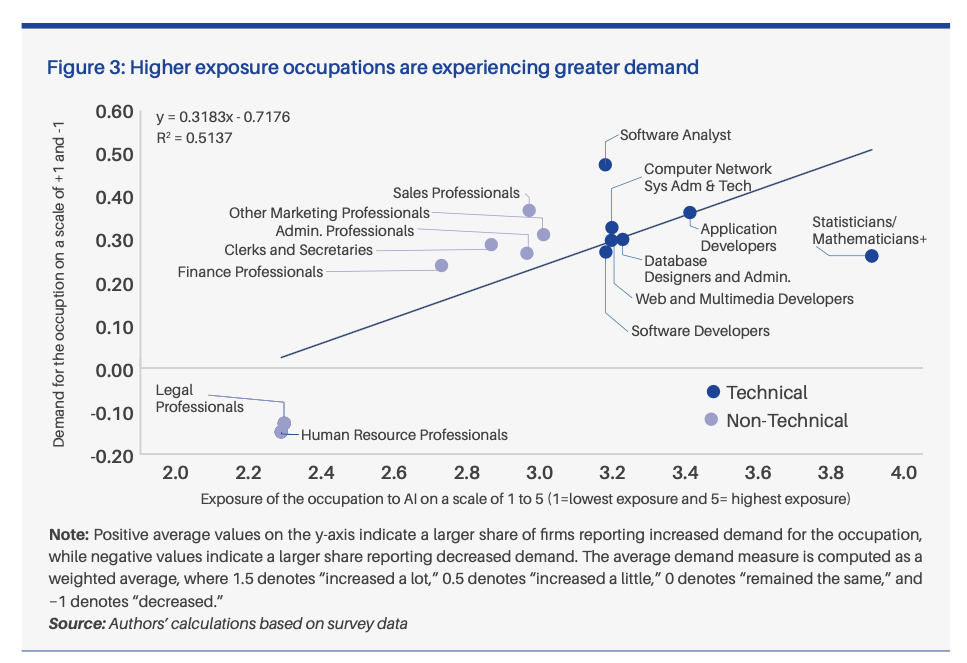

ICRIER, India’s top economic research institution, recently released a study, conducted together with OpenAI, that surveyed 600+ IT firms.

They found no evidence of large-scale job losses.

Quite the opposite: the jobs most exposed to AI (software analysts, application developers, statisticians) are experiencing the strongest growth in demand.

Now here’s the part that should make the Citrini authors uncomfortable. The ICRIER report does find job losses — but where?

Finance professionals, the exact sector in which the Citrini Research analysts sit.

If you work in tech, finance, or any field that the Citrini 2028 Crisis report says is about to collapse, are you seeing what they describe? Or the exact opposite? Share your thoughts in the comments.

Flaw #14: Comparing Apples and Oranges

Everyone remembers what happened after the downgrade. Industry veterans had already seen the playbook following the 2015 energy downgrades.

They compare a potential software debt crisis to, and I’m not making this up, the 2015 energy sector downgrades.

Now. I’m a tech person. I don’t do this for a living.

But even I know that comparing software debt to energy debt in 2015 requires you to explain one thing first: are the capital structures remotely similar?

Because here’s what the data actually shows.

The 2015 energy crisis unfolded the way it did because energy companies had borrowed massively against oil reserves at $100 a barrel, and when oil crashed to $30, the collateral evaporated overnight. It was a leverage crisis with a specific trigger.
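To make the mechanism concrete, here is a minimal sketch with made-up numbers. The reserve size and the loan amount are hypothetical; only the $100 and $30 oil prices come from the text above.

```python
def loan_to_value(loan: float, barrels: float, price_per_barrel: float) -> float:
    """Loan amount divided by the market value of the reserves backing it."""
    return loan / (barrels * price_per_barrel)


BARRELS = 1_000_000   # hypothetical reserve size
LOAN = 50_000_000     # hypothetical: half the reserve value at $100 a barrel

ltv_before = loan_to_value(LOAN, BARRELS, price_per_barrel=100)
ltv_after = loan_to_value(LOAN, BARRELS, price_per_barrel=30)
collateral_drop = 1 - 30 / 100

print(f"Loan-to-value at $100/barrel: {ltv_before:.0%}")
print(f"Loan-to-value at $30/barrel:  {ltv_after:.0%}")
print(f"Collateral value lost:        {collateral_drop:.0%}")
```

In this toy example, a loan covered twice over at $100 a barrel is worth about 167% of its collateral at $30. That is the specific trigger: the debt did not change, the asset behind it did.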

Software in 2026 is not leveraged against a commodity price.

You don’t get to be confidently wrong about technology AND confidently wrong about finance in the same report.

Pick a lane.

Flaw #15: AI is Smarter Than Humans?

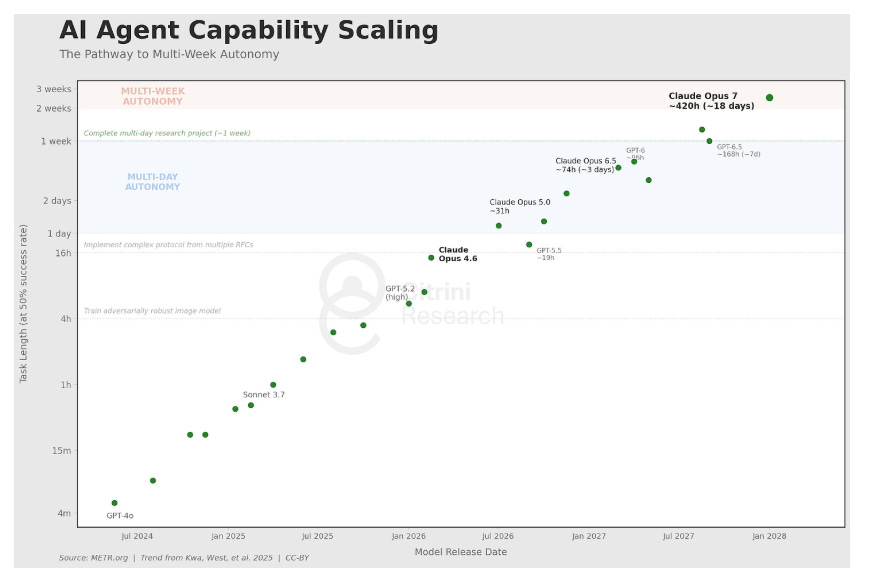

And people traded real money based on what they pointed out in this chart.

We are now experiencing the unwind of that premium. Machine intelligence is now a competent and rapidly improving substitute for human intelligence across a growing range of tasks. The financial system, optimized over decades for a world of scarce human minds, is repricing. That repricing is painful, disorderly, and far from complete.

They even watermarked this one. I genuinely don’t know who they thought was going to steal a poorly made-up chart with wrong assumptions.

It is based on real data, I’ll give them that much, but that is also where the credit stops.

It comes from METR, a well-known AI research organization.

The y-axis isn’t “tasks AI can do.” It’s the length of tasks AI completes successfully half the time. Right now, that number is around 10 hours.

Here’s an analogy. If you ask Claude Opus to make a £10,000 transaction for you, there is only a 50% chance that this money will land in your other account.

However, Citrini took the data and twisted the math. They say AI can now handle “long research and development tasks”, while entirely ignoring that those tasks fail half the time.
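Here is a rough sketch of what a 50% success rate means once you need more than one task to go right. It assumes each task succeeds independently with the same probability, which is a simplification of mine and not something the METR data states.

```python
def all_succeed(p_single: float, tasks: int) -> float:
    """Probability that `tasks` independent tasks all succeed."""
    return p_single ** tasks


P_SINGLE = 0.5  # the 50% success threshold behind the chart

for n in (1, 2, 5, 10):
    print(f"{n:>2} tasks in a row: {all_succeed(P_SINGLE, n):.1%}")
```

Under that assumption, ten such tasks in a row all succeed about 0.1% of the time. Real work is rarely a single task.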

They then extrapolated it to 2028 and used it to predict the collapse of the global economy.

Two Pennies From A Tech Person

Every single flaw we’ve walked through — the token math, the enterprise iceberg, the invisible infrastructure, the regulations, the data that says the opposite — they all share the same root cause.

The authors consistently read the surface of a technology, assumed the surface was the technology, and built a 7,000-word, market-moving, poorly researched piece of science fiction on top of that assumption.

The chart at the end is just the most honest version of what they’ve been doing all along: extrapolating confidently from a number whose footnote says “this is only true half the time.”

Curious: how many flaws did you spot before I pointed them out? (0-5 / 6-11 / 12+)