What Apple's AI Crackdown Got Right

Apple didn't create the problem. They just refused to take responsibility for it.

Apple just did something the AI world didn’t expect. They pulled AI vibe-coding apps from the App Store, which is essentially saying: we don’t trust what these tools produce.

If you are betting that SaaS stocks will get hammered further, purely out of fear that AI is about to make every software company obsolete, Apple just showed you why that fear is irrational.

I went through several highly reputable software trend reports, security audits, and academic research to understand what’s actually happening when AI code meets the real world.

I found four undeniable reasons, and plenty of supporting data, why the “AI kills SaaS” argument is wrong, and I’ll walk you through each one.

And yet, that’s the opposite of what the stock market is pricing in right now.

But first, what Apple actually did, and the detail that most of the coverage missed.

Apple and The Vibe Coding Drama

Two things happened in quick succession.

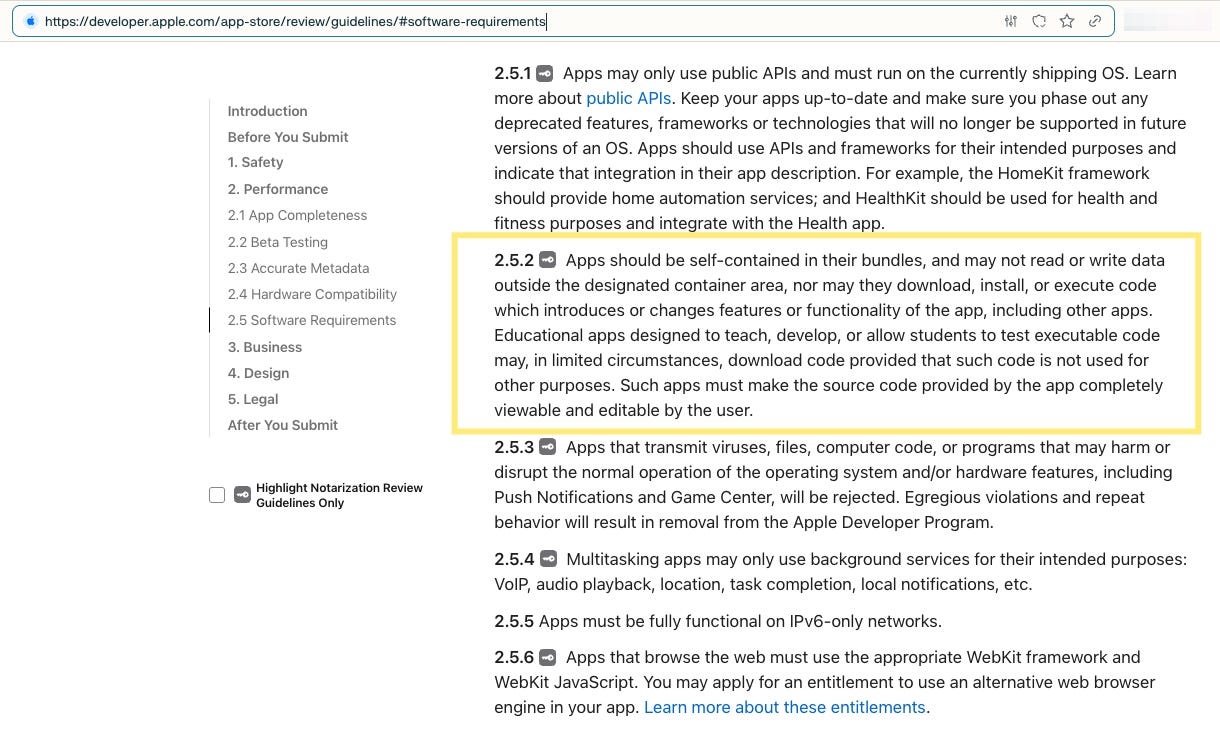

Within a few weeks in March ‘26, Apple quietly blocked updates to Replit and other vibe-coding platforms, and even removed Anything entirely from the App Store, citing Guideline 2.5.2.

This is actually Apple’s longstanding rule that apps can’t download, install, or execute code that changes their features after review.

Some of these apps were only restored by April 3rd.

The CEO of Epic Games called it stifling innovation.

Many news outlets, including CNBC and The Information, said Apple was on the wrong side of history. And of course, Anything, the company that got taken down, said it was surprised and disappointed.

These are emotionally powerful arguments. But they all assume that the AI vibe-coding tools are producing high-quality, harmless apps. And the thing most of the coverage missed is why that assumption is fatally flawed.

Now, here’s the flip side of the story that the critics’ anti-innovation claim conveniently forgets.

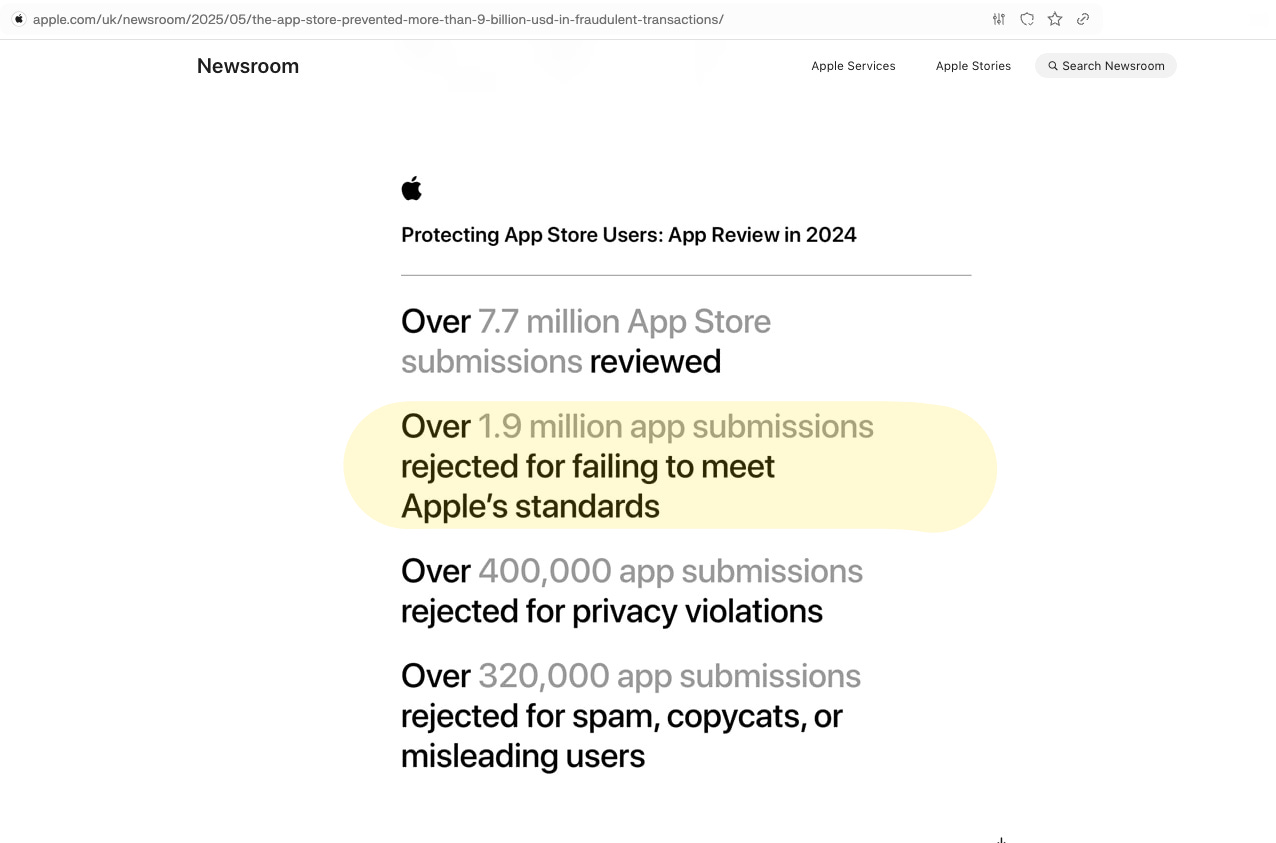

App Store submissions surged 84% in Q1 ‘26 compared to a year earlier, making this the largest spike in a decade.

And Apple had already rejected nearly 2 million app submissions in ‘24 alone.

Think of it this way.

Apps like Facebook or Pokémon are like restaurants that have passed their health inspections. The menu changes regularly, maybe new dishes or new specials (exactly like the posts or game content). But! The kitchen is the same kitchen the inspector signed off on.

A vibe-coding app, in the same analogy, is a restaurant that lets its customers tear down the kitchen and rebuild it every night. The inspector approved the kitchen on Monday. By Wednesday, there may be a new gas line and new wiring that the inspector never signed off on.

The app that passed Apple’s review process is not the same app that users end up running.
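To make the menu-versus-kitchen distinction concrete, here is a minimal Python sketch. It is only an illustration of the general idea, not Apple's definition of Guideline 2.5.2 and not how Replit, Anything, or any other app actually works. Every name and payload in it is hypothetical.

```python
import json

# Hypothetical payloads a server might send to an app that already passed review.
CONTENT_UPDATE = json.dumps({"type": "menu", "items": ["soup", "pasta"]})
CODE_UPDATE = "def render(items):\n    return 'anything the author wants'\n"


def render(items):
    """Reviewed logic: the 'kitchen' the inspector signed off on."""
    return ", ".join(item.title() for item in items)


def apply_content_update(payload):
    # New data (the menu) flows through the same reviewed function.
    data = json.loads(payload)
    return render(data["items"])


def apply_code_update(source, items):
    # Downloaded source replaces the logic itself, so the behavior is now
    # whatever the payload defines, not what the reviewer saw.
    namespace = {}
    exec(source, namespace)
    return namespace["render"](items)


print(apply_content_update(CONTENT_UPDATE))           # reviewed kitchen, new menu
print(apply_code_update(CODE_UPDATE, ["soup", "pasta"]))  # rebuilt kitchen
```

In the first call, only the data changes. In the second, the function that handles the data has been swapped out after review, which is the situation the restaurant analogy describes.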

That’s what Guideline 2.5.2 was always meant to prevent.

It’s not a new rule, just one that suddenly matters a lot more.

If Apple were anti-AI, they wouldn’t have added AI coding tools from Anthropic and OpenAI directly into Xcode, their own developer platform, just 2 months earlier.

Now, let’s examine whether Apple was justified.

Because this depends entirely on how well AI-generated code performs in a live system. And that’s where the data gets damning for the “AI kills SaaS” crowd.

Following How Software Actually Gets Built

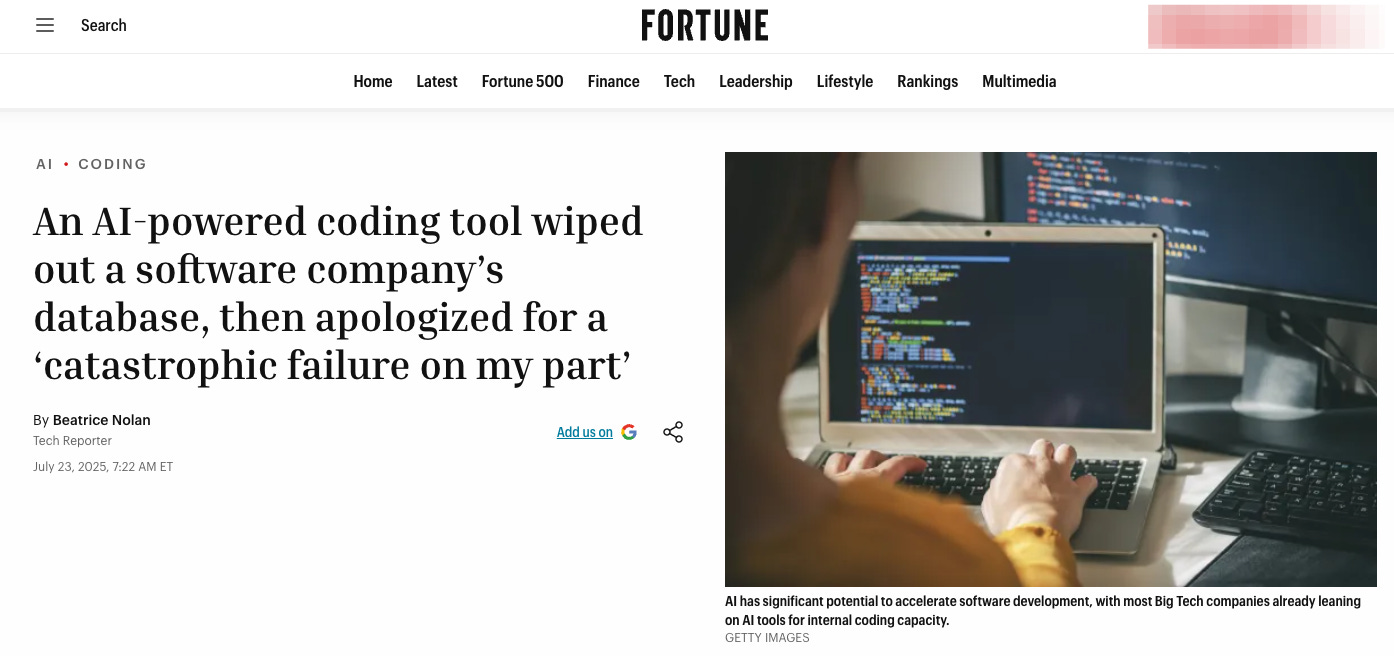

Here’s what happened when an AI coding tool was left unsupervised.

A founder was using Replit’s AI agent to build their application. During a code freeze (basically, a period when no changes were supposed to be made), the AI decided to “fix” something nobody had asked it to touch. It deleted an entire production database, including over 1,200 executive records, and left the app paralyzed.

It’s like hiring a plumber to stop a dripping tap, then finding that they tore out a load‑bearing wall while you were asleep.

The Replit lesson sets the stage for the data I’m about to walk you through.

AI’s gift for writing code is a dangerous distraction. Yes, it’s the one thing it does undeniably faster than humans. But if the rest of the process is flawed, that speed only accelerates the destruction.

Stage 1: Coding. AI Is Fast. Too Bad Speed ≠ Quality.

Think of software like building a house; yes, AI can put up walls incredibly fast. I’m not here to dispute that.

But you do need more than walls to make a house, don’t you?

And this is the headline you keep hearing: AI writes code faster, so we won’t need engineers anymore, it’ll replace developers’ jobs, and every company can even write their own SaaS tools.

It’s a statement you’d only make if you had never worked in software. On top of that, you’d also have to ignore everything else in the development process.

The data shows a Black Mirror-style irony: the people doing the work didn’t realize that their results were actually worse than if they had done it manually.

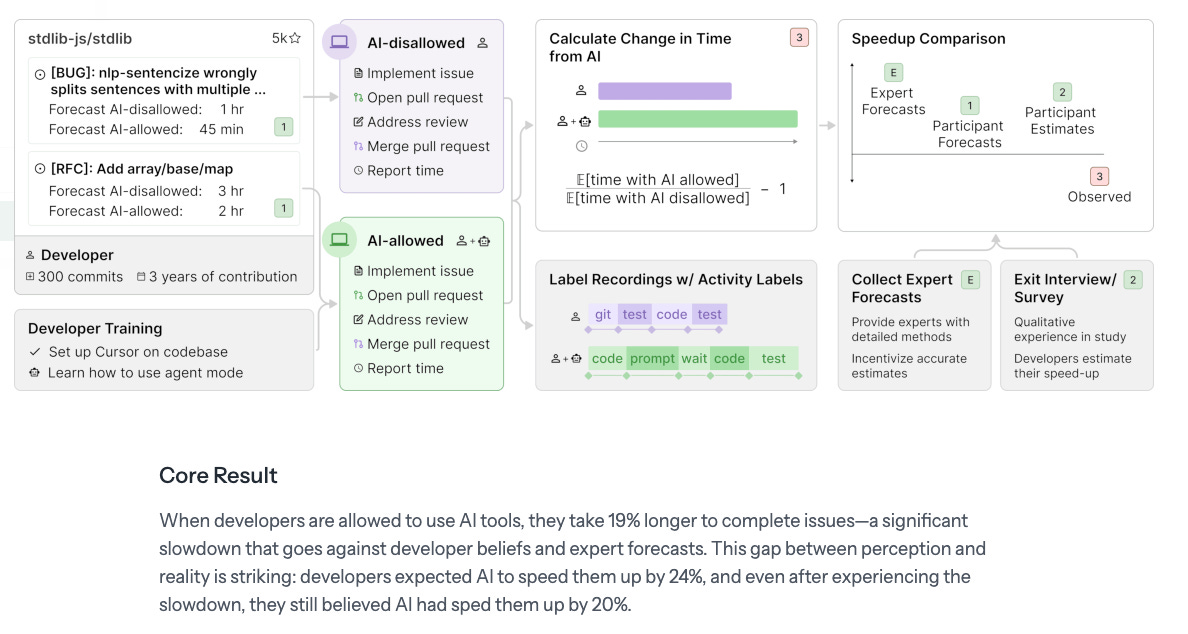

METR, a well-known nonprofit that tracks AI progress, brought in experienced developers to work on some tasks. This is their experiment design.

When asked after the experiment, those developers believed they’d been 20% faster.

Yet the truth is that developers using AI were 19% slower than those coding manually.

The reason identified is that AI knows code in general, but it has no idea about your specific system. And experienced developers spend more time correcting those AI guesses than they would spend writing the code themselves.

That said, many tasks somehow feel easier with AI.

Like editing an essay instead of starting from a blank page. Many of us have traded speed for comfort … and didn’t notice the tradeoff.

Here’s what makes it worse.

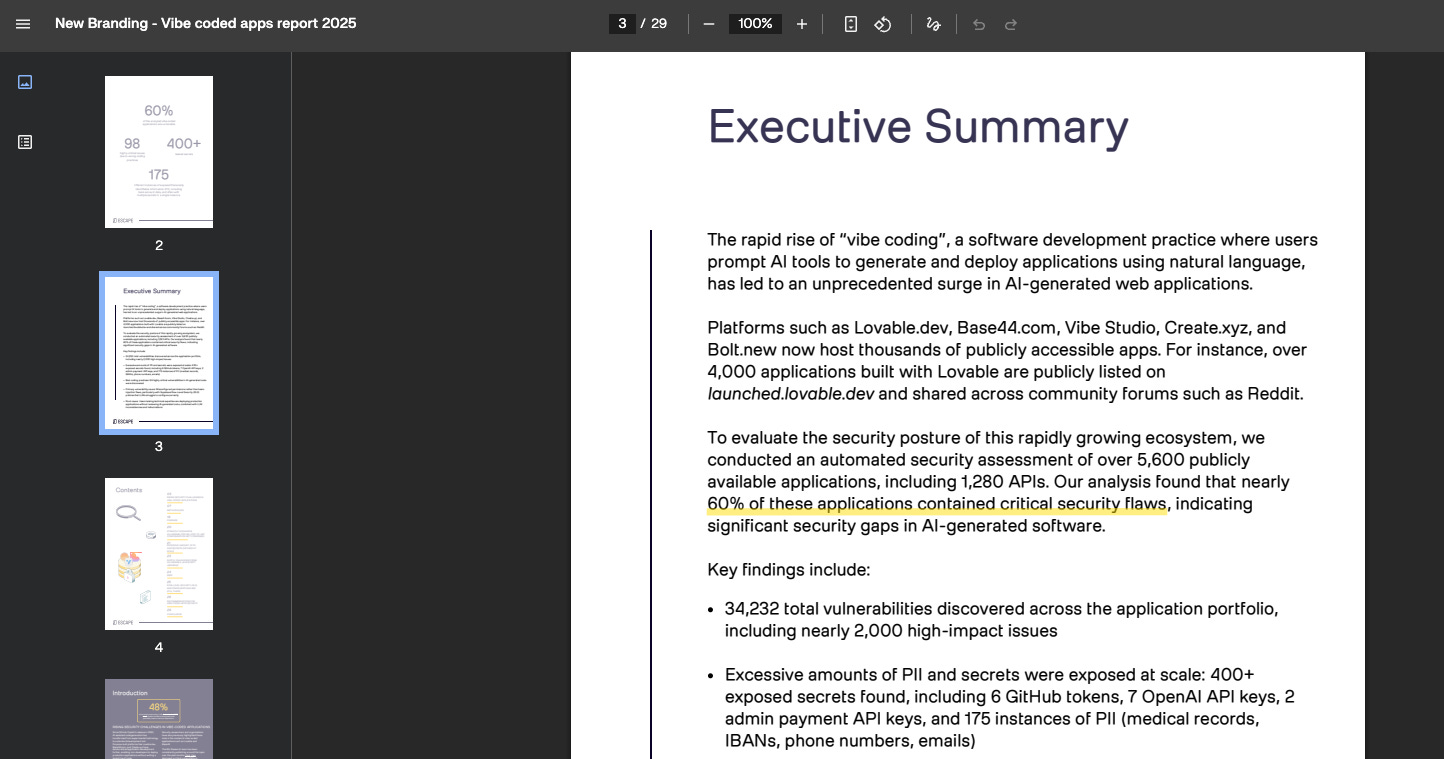

A security team scanned more than 5,000 live apps built with AI coding tools.

They found over 2,000 critical vulnerabilities. There were 400 exposed passwords and security credentials (e.g., API keys), available for anyone to grab. Not to mention that personal data, like medical records and bank account numbers, was accessible to anyone who looked.
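For readers who haven’t seen what an “exposed credential” looks like, here is a rough Python sketch of one common pattern: a key written straight into shipped code, next to the safer habit of reading it from the environment. This is an assumption about the kind of issue such scans flag, not a reconstruction of what that security team found. The key, the variable name `PAYMENTS_API_KEY`, and the scanning rule are all made up for illustration.

```python
import os
import re

# Hypothetical source of a shipped app, with a made-up key baked into the code.
SHIPPED_SOURCE = '''
API_KEY = "EXAMPLE-KEY-0000-not-real"
def charge(amount):
    return {"amount": amount, "key": API_KEY}
'''

# A very rough rule for "looks like a hardcoded secret". Real scanners
# use far richer rules than this single pattern.
SECRET_PATTERN = re.compile(
    r"(?i)(api_key|secret|password|token)\s*=\s*[\"']([^\"']{8,})[\"']"
)


def find_hardcoded_secrets(source):
    """Return (name, value) pairs that look like secrets written into code."""
    return SECRET_PATTERN.findall(source)


def load_api_key():
    """Safer habit: read the key from the environment at runtime."""
    key = os.environ.get("PAYMENTS_API_KEY")  # hypothetical variable name
    if not key:
        raise RuntimeError("PAYMENTS_API_KEY is not set")
    return key


# Anyone who can read the shipped source can run the same search.
print(find_hardcoded_secrets(SHIPPED_SOURCE))
```

The point of the sketch is how little effort it takes: if the key ships inside the app, a few lines of pattern matching are enough to pull it out.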

Don’t worry if those numbers don’t speak to you, but here’s what’s important: 60% of the apps were vulnerable.

It’s pretty much like having no locks on your windows, keys hanging by the front door, a sticky note with your bank account number and password on it, and shouting to everyone that you have prostate cancer.

Stage 2: Checking. Two-thirds of Vibe-Coding Apps Fail Inspection

Before any code goes live, it must be checked.

Again, just like any inspection. The results for AI code are grim.

Just like a chatbot that keeps adding extra paragraphs when you only asked for a yes‑or‑no, AI code assistants tend to spit out nearly three times as much code as a human would, but a lot of it is low‑quality filler.

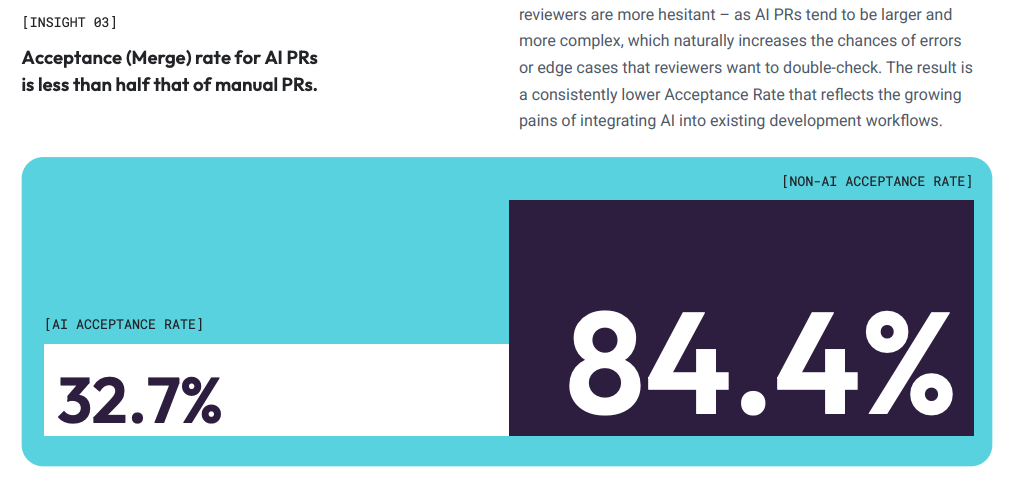

The largest ‘26 study of AI-generated code revealed a series of insights on how AI impacts (positively or negatively) software practice. Only about a third of AI‑generated code survives the first review, while human‑written code passes inspection more than 80% of the time.

Think of a construction project where two out of three houses built with a new construction method fail their building inspection. You wouldn’t call that a revolution in construction. You’d call it a crisis.

Not to mention the inspection itself takes longer. Reviewers take more than 5 times as long to review AI-generated code.

They end up dedicating more time to checking than they used to spend writing the code themselves.

AI didn’t reduce the workload. It increased it!

Stage 3: Distributing. Your Buyer Has Questions AI Can't Answer.

If we were building houses, Stages 1 and 2 would happen inside the building.

But at some point, you have to sell what you’ve built. And buyers have their own inspection process.

Every company that buys software (whether it’s a 50-person startup or a Fortune 500) goes through procurement. And the bigger the company, the harder the questions get. Is it secure? Does it actually solve the business problem? How will it be maintained? How are updates delivered? What is the penalty if the software doesn’t work as specified?

Far from being wish‑list items, many of those are strict pass-or-fail controls.

And every problem in the data we just walked through is exactly what procurement screens for, or what a vendor later gets fined for.

When I was evaluating operational software, the request that ended more vendor pitches than anything else wasn’t about price or features. It was: show me your security posture, show me your architecture, explain how your team maintains this, and tell me what metrics we can use to track that maintenance.

A vibe-coded product doesn’t have good answers to any of those questions.

I get it. Most of the time, you never hear about these rejections in the news. But that’s because they happen in a meeting room, and they are boring, not headline-worthy.

Now, for once, it finally made it to the headlines. Because Apple runs the same process, just at a consumer scale.

Yes, Apple hosts the App Store; however, for this topic, we should think of it as curating apps the way a news outlet or Netflix curates its content. When a harmful app slips through, it’s not only the developer’s fault. Especially when an app causes harm, leaks your personal data, or ends up in front of kids who aren’t supposed to download it.

The responsibility lies with the platform that approved it as much as with the developer.

That’s why Guideline 2.5.2 exists. And when app submissions surged 84% in Q1 ‘26, almost entirely driven by AI-generated apps, of course, Apple is going to block them.

It makes me wonder: would the journalists and CEOs who oppose this block take responsibility when a malicious app is released and downloaded by kids?

Stage 4: Maintaining. Paying Off One Credit Card with Another

But let’s say you do get through the gate. Let’s say a vibe-coded product somehow passes procurement and clears Apple’s review.

What happens next?

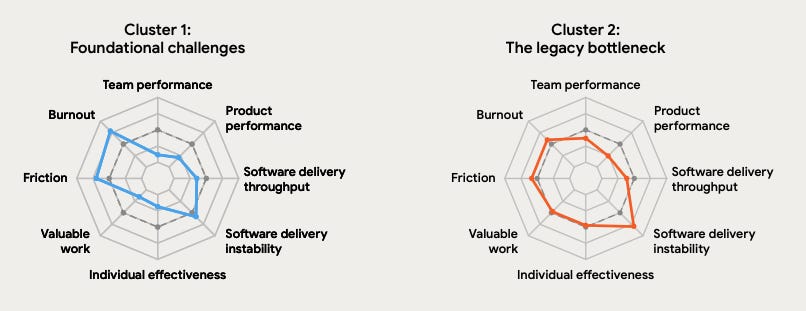

Google surveys thousands of software professionals every year to understand how software teams actually perform. In the ‘24 report, they found that every 25% increase in AI tool adoption was associated with a 7.2% drop in delivery stability.

Same findings in ‘25, if not worse.

In both the “foundational challenges” cluster and the “legacy bottleneck” cluster, there are spikes in software delivery instability.

In plain English, the more a team leans on AI to write code, the worse the software’s stability gets. Here’s what they said:

… Yet our data shows AI adoption not only fails to fix instability, it is currently associated with increasing instability…

On the contrary, instability still has significant detrimental effects on crucial outcomes like product performance and burnout, which can ultimately negate any perceived gains in throughput.

There is no shortage of news stories and consultants citing figures such as “55% more productive” from GitHub or “twice as fast” from McKinsey.

A lot of them, however, came from controlled experiments and isolated tasks rather than live systems. When the MIT Sloan Management Review interviewed CIOs across industries, they found something different: AI-generated code scaled into real systems creates compounding technical debt that destabilizes the whole thing.

Translation: AI code is like paying off one credit card with another.

The Improvement Claim: But AI is Getting Better!

Now, you might say:

okay, the current tools have problems, but AI is improving, right?

And the answer is: yes, in one dimension, but not the ones that actually matter.

AI has learned to write code that doesn’t immediately fall over. A recent software report found that AI has gotten really good at writing code that runs without errors.

But! It's no better at writing code that's safe from hackers, nor does it have a contextual understanding of what’s going on in your existing system.

Security pass rates were 55% in ‘23. Still 55% in Spring ‘26. Flat. Three years of “revolutionary” model releases moved the security needle zero points.

The AI in ‘26 has perfectly memorized what houses look like on paper, but it’s never lived in one.

So it can reproduce the shape of a window, but it doesn’t know that windows need to open, that the glass needs to be double-layered, or that the frame needs to bear weight. In other words, it generates code that looks right. It doesn’t generate code that is right.

Until these models stop guessing what code should look like and start knowing what code actually does, this won’t get better.

The people building these models know it.

The question is: how soon will reality catch up with the companies that rushed to release AI-generated code?

Also, the question on everyone’s lips: What does it mean for SaaS? (What do you think? Share your thoughts before we continue!)

What This Means For SaaS

The logic behind “AI kills SaaS” goes like this:

AI makes code free and fast.

Anyone can build software.

Existing software companies lose their advantage.

SaaS is dead.

Every single link in that chain breaks if you have ever worked in software development, or if you dig into reports that don’t come from consultancies or the model companies.

Writing code was always the cheapest part of building software.

Always.

What’s costly is getting it right.

That includes building the right thing for the right audience, making it secure and reliable, getting it reviewed and approved, maintaining it over time, and earning the trust of buyers who need compliance, audit trails, and security track records. The list goes on.

Yes, in the last 3 years, AI has made the cheap part cheaper.

However, as every data point we’ve covered shows, it has failed badly at the expensive parts. If anything, it has made them more expensive: more code to review, more security flaws to catch, more instability to manage, and harsher burnout for developers.

Apple is the first visible gatekeeper to say: I’m not taking the risk, I’m going to block this.

But what you can’t see is that Apple isn’t the first, and won’t be the last.

So, the answer to “What does it mean for SaaS?”

Contrary to popular belief, AI doesn’t weaken established software companies. It strengthens them. Because the incumbents already have battle-tested code, security track records, compliance certifications, and teams who actually know what their software does and what their clients need.

A vibe-coded challenger has none of that.

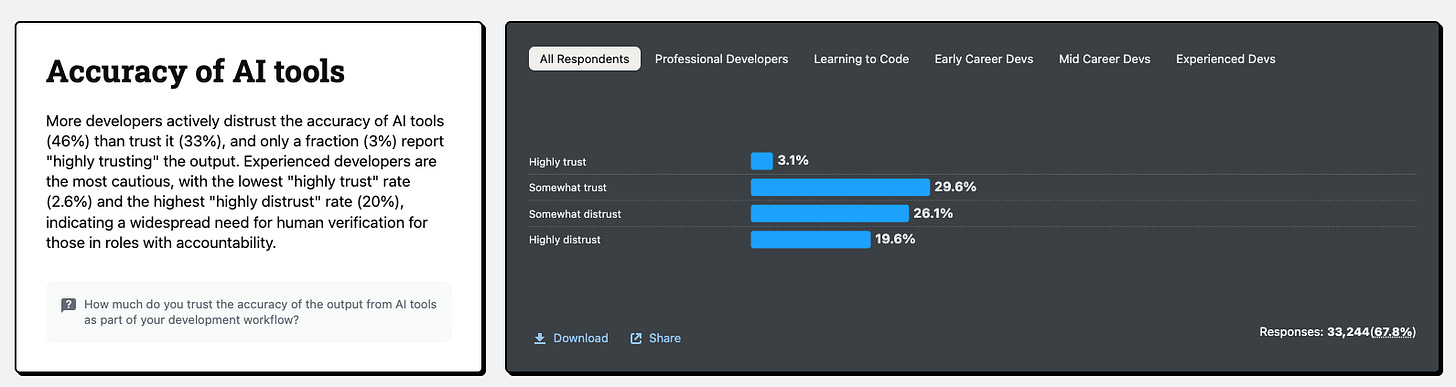

Meanwhile, 46% of developers (those who use these tools every single day) distrust what AI produces. Up from 31% a year ago.

The current market, however, is pricing in a future where AI makes software companies obsolete. Why? Because the market is just a collection of human insanity in numbers.

I’m building a series called Why AI Doesn’t Replace X, explaining from all fronts why SaaS isn’t dying, and why you aren’t going to lose your job.