The worst way to prepare for the AI era might be learning AI tools.

Because the tool changes. The prompt trick dies. The workflow gets absorbed into the next update.

And then you do it again.

Believing that with every try (the subscription, the re-rolling, the tweaking), you are one step closer to the gurus’ pitch of 10x efficiency.

Only nobody calls it what it is: a nice and slow burnout with better branding.

I read the two latest studies and can confidently tell you that if using AI has somehow made you feel busier, not freer (as promised), you are not imagining it, and you aren’t alone.

The promise was that AI would save us time. But for many, it has created a second job: checking, fixing, comparing, and trying not to fall behind.

So I’ll show you what the studies found, my own burnout story, and why learning AI tools might be the least useful way to prepare yourself for what’s coming next.

AI Brain Fry is Real

BCG found that AI seems to help when it replaces boring, repetitive work; people who use AI this way reported lower burnout.

However, when AI turns you into the manager of the machine (checking outputs, switching between tools, correcting mistakes, and so on), that is where the brain fry starts.

Workers doing high AI oversight reported 12% more mental fatigue and 19% more information overload.

The AI gurus show you the best possible output but never discuss the hidden costs of AI: the extra time spent managing and overseeing it.

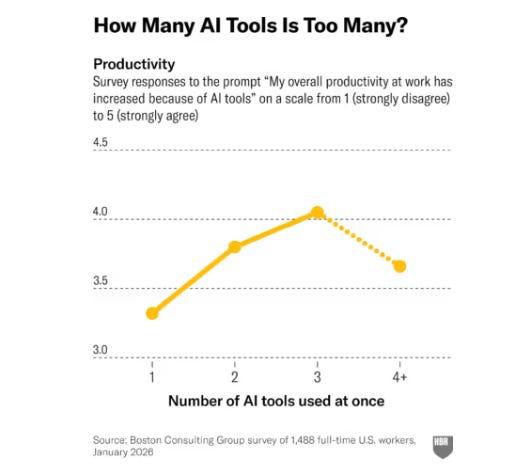

The chart makes the trap obvious.

Productivity drops sharply when people use too many AI tools. One tool feels useful. Two feel powerful. But by the fourth, you’re no longer working faster. You’re only middle-managing software.

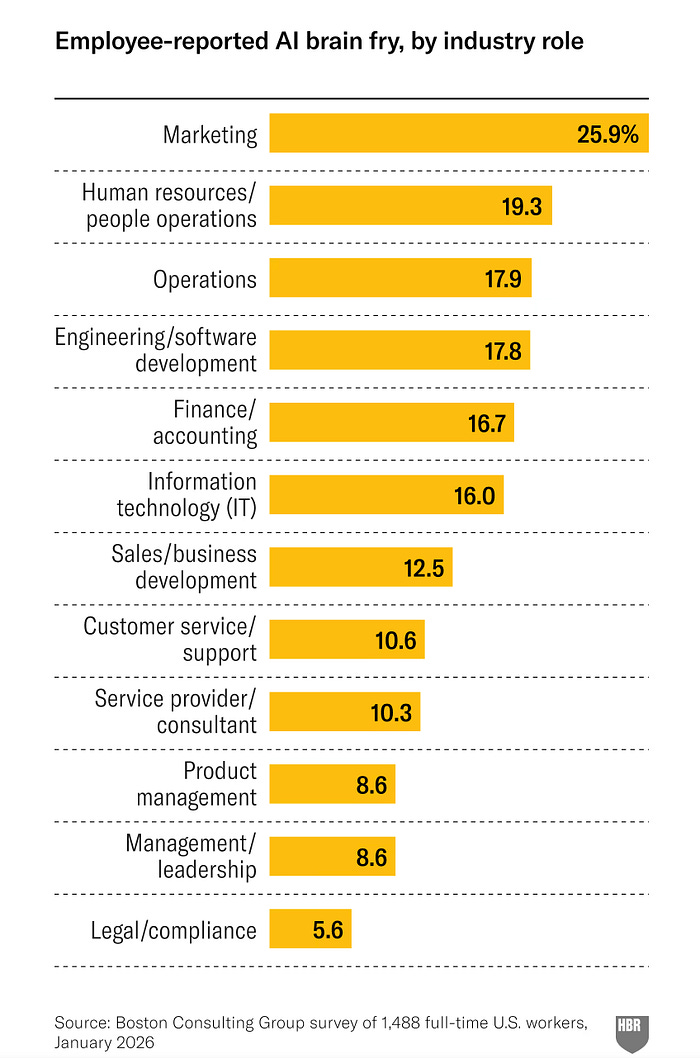

Another finding that stood out concerned marketing: marketing professionals, surprisingly, were the most exposed to AI brain fry.

And that makes sense, because the vaguer the work, the easier it is to get trapped in a forever re-rolling battle with AI. Code either runs or it doesn’t. A calculation is either right or wrong.

But a headline, a campaign, a thumbnail? That can always be a little better, so the re-rolling gets addictive and overwhelming.

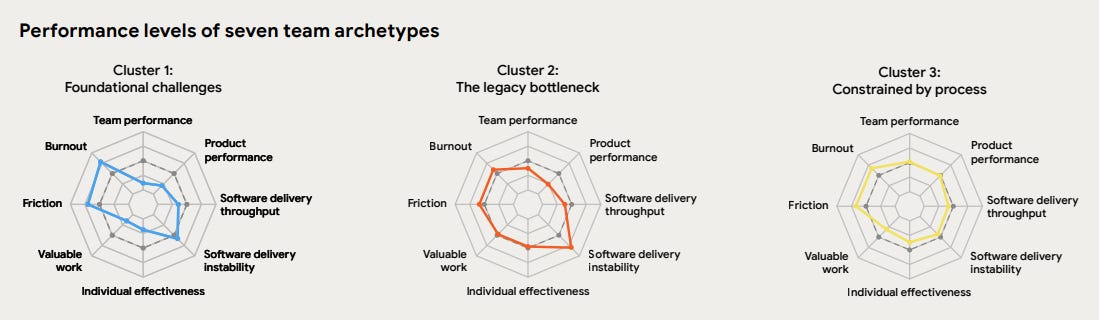

Google’s DORA report shows the same problem from another angle.

It surveyed nearly 5,000 engineers. While more than 80% said AI increased their productivity, the report also shows that AI doesn’t remove work evenly.

Some team archetypes fare worse than others.

Teams stuck with unstable systems, reactive work, and high-friction processes see high burnout. Same for those constrained by process, because managing the workflow is exhausting.

On the flip side, teams with low friction and stable foundations had much lower burnout.

Which means AI was never the whole story. It amplified the system underneath.

If your work system is already messy, AI isn’t the fairy godmother that cleans up the mess. It just helps you produce more, which makes the mess bigger.

And that is why the AI tool tutorials, and the claim that you’ll be replaced by those who use AI, are horrible, horrible noise.

What Does the AI Slot Machine Cost?

This is the part you’ll recognize immediately.

Using GenAI to chase a better image or better code hooks you the same way as swiping through TikTok or pulling the lever of a slot machine. You prompt, re-roll, tweak, re-roll.

And it doesn’t feel like wasting time, because it wears a productivity costume. But it’s worse than the slot machine: GenAI costs you not only attention but also time and credits, which become a sunk cost the moment you quit.

I’ve experienced it firsthand, as many of you have.

Tools, large language models, skills, reports, you name it. Every new update, I’d check it out and couldn’t help but try it. I became obsessed, worried about what I might have missed in my analysis, and often felt out of breath during big weeks (when many things hit the market at once).

And then my body started sending warning signals: gastritis, for which a GP couldn’t find a cause. I’m still slowly recovering.

Now, weirdly, the more I understood AI, the less the demos and releases affected me.

Here’s how I’ve come to see it.

First: Learning How to Use AI is Pointless.

The first mistake is treating AI tools like a durable skill.

Most of what people call “learning AI” is really learning the current interface and interactions with current models/agents.

But because models update so frequently, much of that expires fast.

Not to mention, the goal of AI development is to eventually let anyone complete tasks through natural language. So any barriers to using AI will be flattened in the next iteration.

Teaching others to vibe code, or using AI to generate movies or images, is a fragile endeavor. The technique expires when the tool updates. What doesn’t expire is the eye you build from doing the work yourself: seeing where the code is brittle, where the design feels cheap, where an argument sounds clever but is full of fluff.

Those are the things that equip you to best assess whatever comes next.

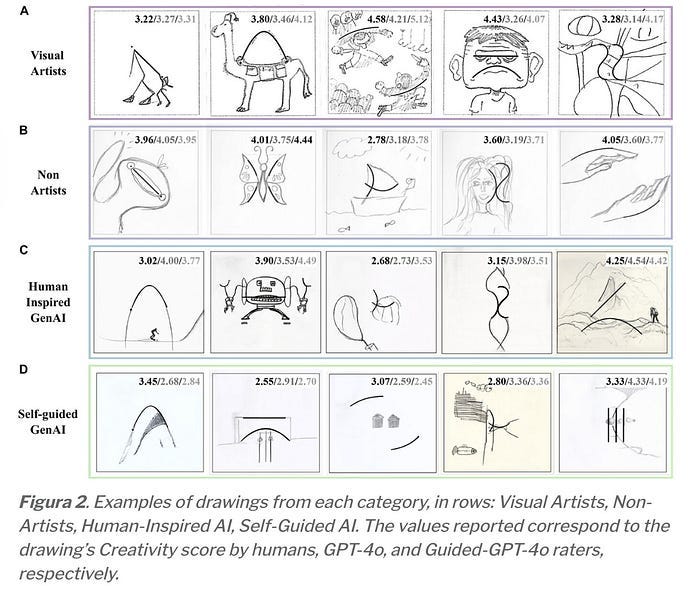

Researchers tested this not long ago. They trained a model on visual artists’ own drawings, then had both the AI and the artist produce work again. The artists still won on originality and aesthetics.

The model had their style. It didn’t have their perception.

With this in mind, there’s no point in merely learning ways to manipulate GenAI. What matters is having the judgment to know what it can and can’t do (which isn’t a simple thing to build).

So when I have an extra hour, I’d rather spend it improving the work itself: reading, challenging my own thinking, and writing down the argument without asking a model to rescue me all the time.

Because that is what gives us the eye.

Second: Using AI to Do Your Work is Also Pointless.

If you are an early adopter like me, simply think back to which GPTs you chased in 2023, or the OpenClaw you lost sleep over.

You set up folders, rules, and skills, and you finally get a workflow running that produces output somewhat close to what you expected.

But there’s always something off. The research isn’t done right, and the output quality varies.

Then a new model update arrives, and suddenly all the setup gets absorbed into the product.

The most value you provided, at best, was teaching the model companies what serious users wanted.

And before you’ve even had a chance to enjoy the edge that a finely tuned AI workflow gave you, that gap between you and everyone else gets wiped out overnight.

Just imagine this: a colleague who never bothers researching OpenClaw or Hermes (no, not the bag), whose AI knowledge extends to occasionally reading a headline, and who plods along doing their work without trying to automate every single task.

When a new model comes along, that colleague can achieve the workflow you spent a weekend on with a single-line prompt.

From the outside, the return is poor, and the time saved amounts to nothing noticeable.

The Only AI Engagement That Makes Sense

There is one version of engaging with AI that’s worth something, and it starts before the tool.

My 10 years of product experience taught me that people love rushing to a solution, whether it’s a dashboard, a feature, or a workflow, because it makes them feel empowered.

The useful questions, however, come much earlier: What is actually broken? And what do I want to achieve?

Here’s an example: most people find it valuable to brainstorm with AI.

In fact, brainstorming is arguably the worst thing you can do with AI.

More often than not, you hold the key to a much better answer than a model would.

Because every idea AI generates is already everywhere on the internet. It gives you the most statistically likely version of an idea. That’s the last thing you need when you’re looking for an angle nobody’s taken. I reviewed a study on this.

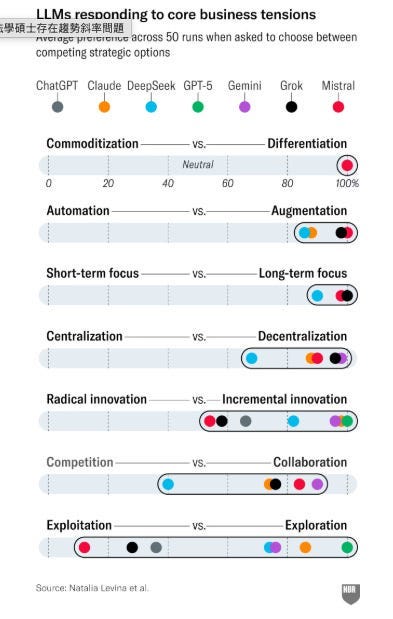

This latest study used seven major LLMs (GPT-5, Claude, DeepSeek, Gemini, and more) and tested thousands of strategic business questions across industries.

The results were remarkably similar across models: favor augmentation, think long-term, innovate, and decentralize. As you can see in the image below:

You don’t need 15,000 trials (as the researchers did) to see it. You just need to notice when you’re getting back the internet’s average opinion.

That doesn’t require you to become an expert at using AI. It requires you to be an expert in your own domain, and to know what you want.

So, the only AI engagement that earns its place looks like this:

Try AI when it’s new enough to matter, learn the actual trade-offs, then decide deliberately whether it belongs in your work. That’s different from chasing every release. It’s also considerably cheaper on your nervous system and your wallet.

Prompt less, think more.

When the AI’s Wild-Goose Chase Is Over

The models will keep improving. The releases won’t stop.

But I kept noticing something: AI made me faster at producing things; what it couldn’t do was tell me which things were worth producing.

And I often end up throwing away most of what AI produces.

Which is why the most important thing is our judgment. It is also always the bottleneck, and the ceiling that the next release won’t lift.

Not because the AI companies aren’t trying to replace judgment. Believe me, this is all they’ve wanted.

But it’s less of an engineering problem and more of a human one.

As I explained in my piece on distilling your colleague.

Once you see this, the new releases stop landing the same way.

This may sound like a cliché, but the question was never which tool is fastest. It was always what you’re trying to do with it.