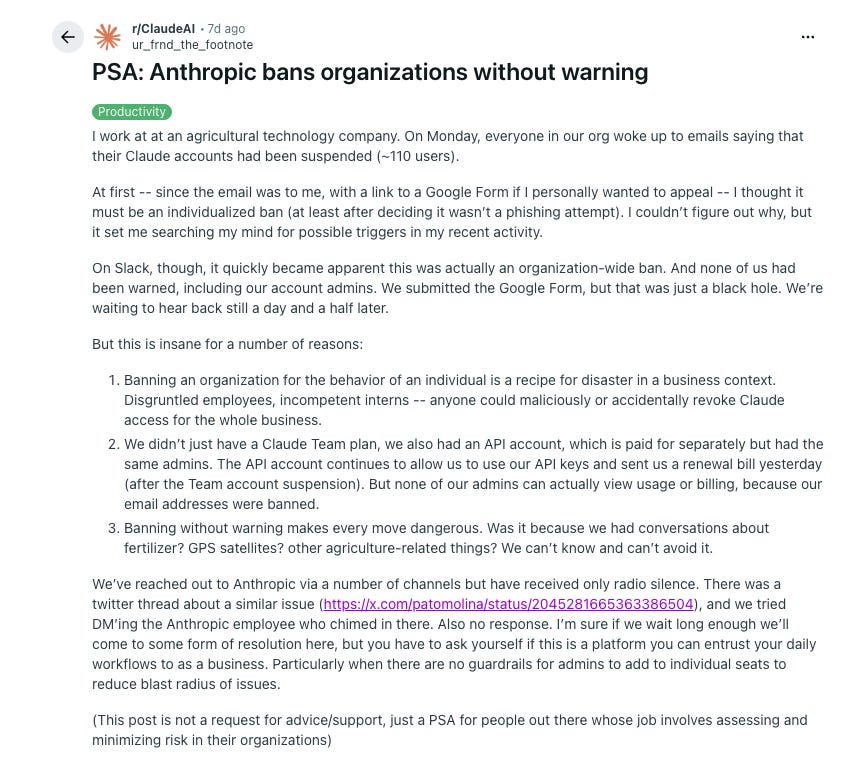

Anthropic suspended every Claude account at a 110-person company, all at once, on a Monday morning, without warning.

While the team was locked out, their API keys kept billing. They couldn’t view their own usage data because their email addresses had been banned.

A second company was hit the same way that same month.

I studied both incidents against Anthropic's commercial terms. I found four structural reasons this failure could happen to any team, and all of them are written into the terms every Claude API customer has already accepted.

At the end, you'll also find three practical ways to manage the risk, drawn from conversations with several CTO groups.

But first, the incident, because the part that should concern you isn't just the suspension but what happened the moment the accounts were locked.

The incident

Monday started like any other workday for this company of roughly 110 people.

The first signs of trouble appeared in the operations channel on their Slack. One person posted a screenshot showing they couldn't access Claude. Then another. Within ten minutes, people across the company were asking the same question:

What happened to my Claude account?

The answer surfaced quickly. It was not just one person’s Claude account. Everyone’s Claude account had been suspended.

The company is a U.S.-based agricultural tech business.

The original poster (OP) says the company used both Claude Team and a separately billed API account, which suggests Claude ran not only through team seats but also through API-based workflows. He describes Claude as part of the company's "daily workflows" and mentions conversations about fertilizer, GPS satellites, and other agriculture topics.

Then Anthropic cut everything off.

Just like that, 110 accounts were suspended at the same time. No warning. Anthropic didn't send the company one notice; it sent 110 separate ones.

So at first, same as everyone else, the OP thought it must be something he’d done.

The email was addressed to him, and the link went to a Google Form for an individual appeal. He started searching his own recent activity for a trigger. Was it the conversations about fertilizer? GPS satellites? Agriculture topics that might have looked suspicious to an automated system?

He had no way to know.

It wasn't until people started comparing notes on Slack that the picture became clear: the entire organization had been blocked at once. A single mistaken trigger, whether from a human or a bot, could take every account in the company offline.

They submitted the Google Form, then reached out through every other channel they could find, including DMing an Anthropic employee who had commented on a similar case on Twitter.

No reply after twelve hours.

Twenty-four hours passed, no reply.

Thirty-six hours passed, radio silence.

Then came the absurd part.

Remember? The company didn’t have only a Claude Team plan; it also had a separately billed API account under the same administrators. The API keys kept working, and a renewal bill arrived.

But because the admins’ email addresses had been banned, they couldn’t log in to view usage or dispute the charge!

By the time the post hit Reddit, access still hadn’t been restored.

What’s concerning is not only the suspension itself, but this pattern of:

automated enforcement,

vague email,

generic appeal route,

and no immediate business support when the system gets something wrong.

Most importantly, this wasn't a one-off event.

Another company had already been hit in much the same way.

Not long before, Pato Molina, CTO of a fintech company, posted publicly on X that Anthropic had taken down his entire organization, 60-plus accounts, without explanation. He shared the email Anthropic had sent:

Our automated systems detected a high volume of signals associated with your account which violate our Usage Policy... As a result, we have revoked your access to Claude.

Anthropic emphasized that a human had reviewed the decision and agreed with the machine. Only later did Anthropic confirm it was a false positive, and access was restored.

The full explanation:

We apologize for any inconvenience.

More than sixty people were locked out. Integrations, conversation histories, and the internal processes their teams ran on were all either gone or on indefinite hold.

It's tempting to think these two companies simply slipped through the cracks of Anthropic's enterprise support.

More likely, they were on the same standard plans anyone clicks through when setting up Claude Team or an API account.

If this happened to your team, you'd receive the same email, the same form, and the same silent treatment.

Which raises an obvious question: what does the standard deal actually say?

It turns out Anthropic gave these companies almost nothing to begin with, and not for the reason you'd expect.

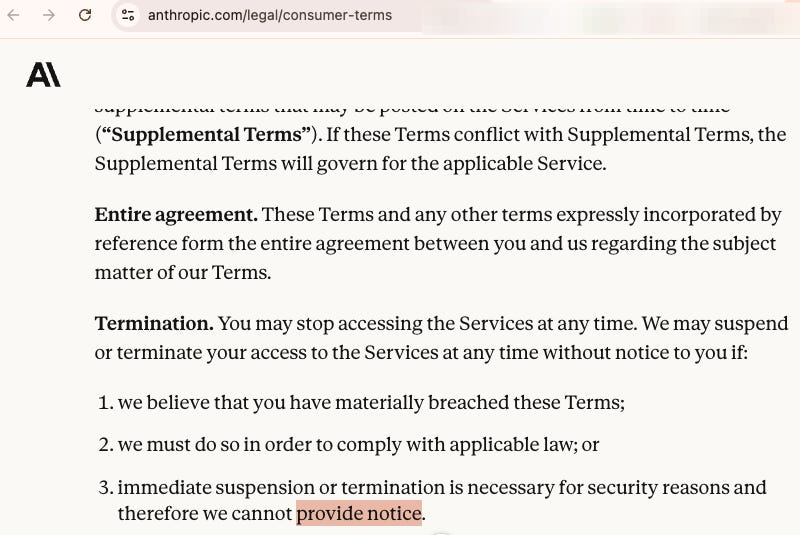

What the policy actually says

Of course, I went down the rabbit hole. I read the terms.

The terms are actually more protective than you'd expect from reading about those two companies' experiences.

For individual users on Claude.ai, Anthropic can terminate your access if:

we believe that you have materially breached these Terms;

we must do so in order to comply with applicable law; or

immediate suspension or termination is necessary for security reasons and therefore we cannot provide notice.

In contract law, "materially" means serious enough to justify ending the relationship. A grey-area use doesn't get you there.

The Commercial Terms go further. Anthropic may suspend a customer:

if Anthropic reasonably believes or determines that... Customer or any User is using the Services in violation of Sections D.1, D.2 or D.4.

These are respectively:

Follow the law.

Follow Anthropic's policies.

Don’t compete with Anthropic.

Remember "Don't compete with Anthropic." It is very important, and I'll come back to it in a future piece.

Then comes the liability clause, Section I.3.b:

Anthropic will have no liability for any damage, liabilities, losses (including any loss of data or profits), or any other consequences that Customer may incur because of a Service Suspension.

So if you read these commercial terms carefully, you'll realize that Anthropic can suspend your entire organization, keep billing your API account, go silent for hours or days, and owe you nothing.

It's all documented, and these two companies (and you, too) agreed to it by clicking accept.

Not to mention that these two firms are likely too small to matter much to Anthropic. That's why they had no tailored SLA, no account manager, and no dedicated escalation path that bypassed the form.

Four structural failures

So let’s be precise about what actually failed here, because the story isn’t simply that Anthropic made a bad call.

Failure #1: No granularity.

When Anthropic's automated detection flags a violation, the consequence is binary. The whole organization goes dark; it's all or nothing.

All it takes is one careless colleague, or an automated system that misreads a conversation about fertilizer as one about bomb ingredients. That's it. Anthropic doesn't care whether you use the AI only for your internal team or also to serve your customers.

Failure #2: No enterprise escalation path.

The appeal process for a 110-person business is a Google Form, with no priority queue. These accounts are treated exactly the same as individual users.

It says a lot about who Anthropic sees as its real customers: enterprises with large orders. At Anthropic's burn rate, your account only matters if your annual spend registers as more than a rounding error on their revenue sheet.

Failure #3: No liability.

As discussed, Anthropic's terms eliminate its liability. That means any revenue you lose while your team is locked out is, I hate to tell you, not their problem.

It's worth asking why, and worth reconsidering whether that loss is one you can bear.

The answer is simple incentive math.

A company that carries liability for mistakes has a financial reason to prevent them.

Anthropic doesn't carry that liability.

So apart from losing you as a customer and taking a small hit to their reputation (and which AI company has a spotless one anyway?), it costs them nothing, since none of it registers on their balance sheet.

You absorb the cost of every mistake, whether the carelessness is yours or theirs.

Failure #4: No human in the loop.

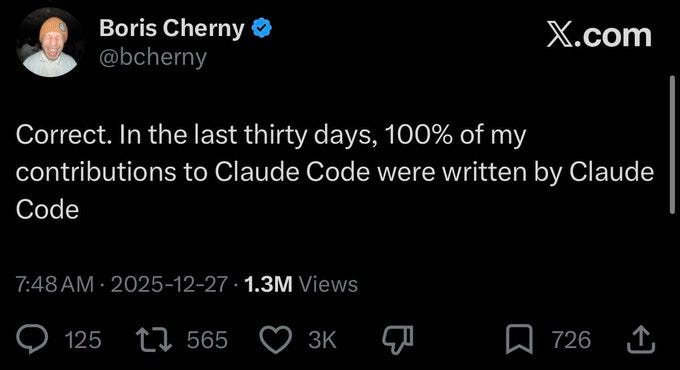

After the Claude Code source leak in March 2026, Boris Cherny, Anthropic's lead engineer on Claude Code, first admitted:

100% of my contributions to Claude Code were written by Claude Code.

And then he acknowledged the packaging error that caused the leak, and said:

The counter-intuitive answer is to solve the problem by finding ways to go faster, rather than introducing more process.

On top of that, an analysis of a public Claude issue tracker found that between 49% and 71% of all issue closures were automated.

So in plain words, the product writes, monitors, and fixes itself.

When something breaks, the answer is to remove more humans, not add them, because they trust the bot to fix the crack.

This mindset might be defensible for a small-scale experiment, but the comment came right after a major leak of their flagship product. The engineer's public response wasn't "we need better review," but rather "we need to move faster and let AI check AI's work."

If this is how they respond to a serious breach, I wouldn't be surprised if people's accounts got randomly suspended. I also wouldn't be surprised if there was little real investigation, with bots handling most of the process from triggering the ban to reviewing and lifting it.

Now you may say,

fine, I'll just switch back to OpenAI or move to Gemini.

But is it that simple? Maybe this has been the standard AI company playbook all along?

Claude vs. OpenAI vs. Google: which is better?

I pulled the equivalent suspension terms for OpenAI and Google. Here's the short version.

Google's Gemini API terms don't mention suspension, but its general Google APIs Terms of Service say:

Google may suspend access to the APIs by you or your API Client without notice if we reasonably believe that you are in violation of the Terms.

Surprisingly, OpenAI's Business Terms are materially better on one specific point:

If an End User: (a) violates the Agreement; or (b) causes, or will cause, a Security Emergency, then OpenAI may request that Customer suspend or terminate the relevant End User account. If Customer fails to promptly suspend or terminate the End User account, then OpenAI may do so

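In other words, OpenAI targets the individual end user and gives the customer the first chance to act, which is exactly the granularity missing in Anthropic's case. Still, all three providers point in the same direction: each reserves the right to suspend access on its own judgment.

So are you stuck, with no alternative?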

Not quite. Here is something real and concrete for you.

So, are you held hostage by AI companies?

In a sense, yes: businesses are embedding AI tools faster than any procurement process or ISO checklist can keep up.

I ran this past a few CTO groups and received some interesting comments and advice.

Here are the ones I think make the most sense. Some are simply good practice; others are unique to this new world, where a single vendor has such an outsized impact on your organization.

Counter play #1: design for unreliability.

The full rug pull is the extreme version, but Claude's status page has lit up like a Christmas tree lately. If you cannot do your job without Claude, estimate how much downtime costs you. That number is your budget for figuring out alternatives.
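To make "how much downtime costs you" concrete, here is a back-of-the-envelope sketch. It assumes cost scales with hours down, people affected, their loaded hourly cost, and the fraction of their work that actually stalls; the function name and every input below are hypothetical, not figures from either incident.

```python
def downtime_cost(
    hours_down: float,
    people_affected: int,
    hourly_cost: float,
    productivity_loss: float,
) -> float:
    """Rough cost of an outage: the share of paid time lost by everyone affected.

    productivity_loss is the fraction of work (0.0 to 1.0) that stalls
    while the AI tool is unavailable.
    """
    if not 0.0 <= productivity_loss <= 1.0:
        raise ValueError("productivity_loss must be between 0 and 1")
    return hours_down * people_affected * hourly_cost * productivity_loss


# Hypothetical inputs: two 8-hour workdays, 110 people,
# a $60/hour loaded cost per person, and 30% of work stalled.
cost = downtime_cost(hours_down=16, people_affected=110, hourly_cost=60.0, productivity_loss=0.3)
print(f"Estimated cost of one outage: ${cost:,.0f}")
```

With these made-up numbers, a two-day lockout comes to roughly $31,680. Multiply by how often you expect an outage per year and you have a rough ceiling on what a fallback is worth.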

One of the obvious alternatives is self-hosting models.

We're reaching the point where hosting a model on AWS, Azure, or even a high-end Mac is feasible. You won't get frontier-model performance, but for many agent-style tasks it's plenty powerful. Running on your own hardware gives you more control, better availability, and fewer token-budget worries. If AI is this critical to your organization, the cost of that control might be worth it.
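One way to design for unreliability is to route every model call through a fallback chain: try the hosted provider first, and if the call fails for any reason (suspension, outage, rate limit), fall through to the next option, such as a self-hosted model. The sketch below assumes each provider is wrapped as a plain function that takes a prompt and returns text; `hosted_claude` and `self_hosted_model` are hypothetical stand-ins, not real client APIs.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every configured provider has failed."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # suspension, outage, rate limit, etc.
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-in: simulates a hosted account that has been suspended.
def hosted_claude(prompt: str) -> str:
    raise PermissionError("account suspended")


# Hypothetical stand-in for a model running on your own hardware.
def self_hosted_model(prompt: str) -> str:
    return f"[local] summary of: {prompt}"


if __name__ == "__main__":
    used, answer = complete_with_fallback(
        "Summarize today's field sensor report",
        [("hosted-claude", hosted_claude), ("self-hosted", self_hosted_model)],
    )
    print(used, "->", answer)
```

The order of the list is the policy: put the strongest model first and the one you control last, so a suspension degrades quality instead of stopping work.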

Counter play #2: limit the blast radius.

We can’t avoid talking about blast radius when it comes to agents.

Blast radius is the impact on your organization when something goes wrong. One agent that handles your entire workflow has a much larger blast radius than any one of 10 agents completing that same workflow. In fact, there's a very good chance that of those 10 agents, 8 would be better off as ordinary scripts, and only 2 steps actually need LLM access.
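Here is a minimal sketch of that idea, assuming a workflow can be modeled as a list of steps where each step is flagged by whether it needs a model. The step names, the `needs_llm` flag, and the yield-report example are all hypothetical. When the LLM is unavailable, only the flagged steps are skipped and the rest of the pipeline still runs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]
    needs_llm: bool = False  # only these steps depend on an external model


def run_workflow(steps: list[Step], data: dict, llm_available: bool) -> tuple[dict, list[str]]:
    """Run steps in order; if the LLM is down, skip only the steps that need it."""
    skipped: list[str] = []
    for step in steps:
        if step.needs_llm and not llm_available:
            skipped.append(step.name)
            continue
        data = step.run(data)
    return data, skipped


# Plain scripts: no model involved.
def parse_rows(d: dict) -> dict:
    return {**d, "rows": [line.split(",") for line in d["raw"].splitlines()]}


def total_yield(d: dict) -> dict:
    return {**d, "total": sum(float(row[1]) for row in d["rows"])}


# Hypothetical LLM step; a real version would call whichever model client you use.
def draft_summary(d: dict) -> dict:
    return {**d, "summary": f"(model-written summary of {len(d['rows'])} fields)"}


def format_report(d: dict) -> dict:
    report = f"Total yield: {d['total']}"
    if "summary" in d:
        report += "\n" + d["summary"]
    return {**d, "report": report}


PIPELINE = [
    Step("parse_rows", parse_rows),
    Step("total_yield", total_yield),
    Step("draft_summary", draft_summary, needs_llm=True),
    Step("format_report", format_report),
]

if __name__ == "__main__":
    result, skipped = run_workflow(PIPELINE, {"raw": "field_a,12.5\nfield_b,17.5"}, llm_available=False)
    print(result["report"])  # the report still ships, just without the summary
    print("skipped:", skipped)
```

Three of the four steps here are ordinary code, so a suspended account costs you one paragraph of prose in the report rather than the report itself.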

Counter play #3: keep data separated.

And finally, agents, of course, need data.

Without getting on my data privacy and sovereignty soapbox, it's worth considering where your data is stored and how it's accessed. Files uploaded to Claude are far harder to untangle than a directory on your own computer that the agent is simply given access to.

Klaas, a veteran CTO, helped me complete this piece by bringing a practical CTO's perspective.

If you run a company with $15M ARR or more, don't be shy; reach out and ask him:

For what we’re trying to achieve in the company, where are the biggest AI gains and risks?