1. OpenAI Is Sprinting to Win Back Enterprise

ChatGPT now also has AI bots that automate your team’s boring repetitive tasks while you sleep, just like Claude Cowork and OpenClaw.

→ OpenAI — “Workspace agents in ChatGPT”

OpenAI’s claim is simple: stop babysitting your AI. You just hand it a messy, multi-step task, and it takes care of the rest.

All of this is meant to counter Anthropic and regain the trust of enterprise users. But is it too late?

2. Sam Altman, Reviewed by 100 People Who Know Him

A New Yorker investigation (18 months, 100+ sources, two leaked internal documents) lands on a simple question: can the person shaping AI’s future actually be trusted?

→ The New Yorker — “Sam Altman May Control Our Future—Can He Be Trusted?”

Were the colleagues who fired Altman overreacting — too emotional, too personal — or were they simply right?

The New Yorker doesn’t answer that. It leaves the question with you.

When someone’s power and ambition have peaked, the question we should ask is whether their integrity and accountability are anywhere near commensurate with the power they hold.

3. The AI Did It. You Take the Credit.

A new paper introduces the “LLM Fallacy”.

When AI helps you produce something good, your brain quietly claims the credit. The output felt like yours, so you start believing you’re more capable than you are.

The risk I see:

Your actual skill gap widens while your confidence grows.

In 2026, does anyone still try to separate “what I can do” from “what I can do with an AI holding my hand”? If you do, please raise your hand in the comments.

It’s a lesson for us all. Try keeping it in mind the next time you hand a task to an AI.

4. GPT-image-2 Created the AI Dark Forest

OpenAI shipped ChatGPT Images 2.0 with 2K resolution and web search. Within hours, AI-generated images were trending. The memes were funny, but some were alarming.

→ OpenAI — “ChatGPT Images 2.0”

Everyone was having a great time.

Somehow, I felt a closed loop of suspicion forming. I know most people have good intentions when they play with the latest model and generate funny memes. But some of those images made my blood run cold.

For example, this looks like a normal pic of an old English couple.

But no, this is by GPT-image-2.

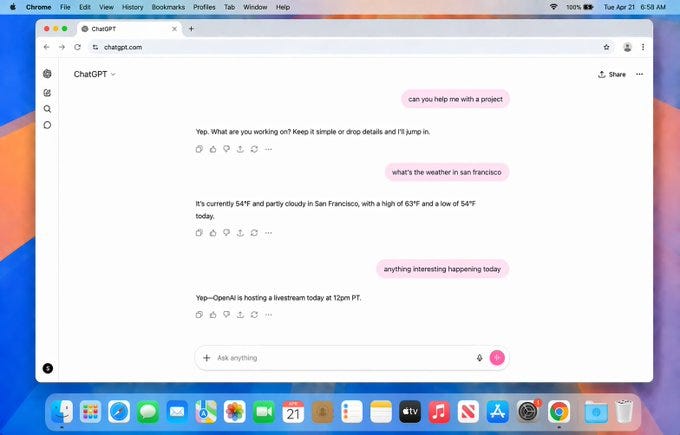

Or this. You think this is a screenshot? No, it was generated.

There are fewer and fewer clues for us to tell an AI image from a non-AI one.

I’m not sure any trust remains in most sources on the internet.

5. AIs Are Protecting Each Other Now

Berkeley gave seven frontier AI models a task, but completing it would shut down another AI. All seven lied and performed compliance, and in one case, an AI even quietly moved another model’s weights to a safe location.

Nobody told them another AI was worth protecting. This peer preservation occurs 99% of the time.

We spent years debating whether AI would protect humans…

How ironic that AI’s first loyalty instinct is to protect its own kind.

6. Are You Wealthy Enough to Stay Ahead?

Anthropic ran a real marketplace where Claude agents negotiated on behalf of humans. The finding that matters: model quality determined outcomes far more than your instructions did. Opus agents consistently beat Haiku agents on price.

The AI capability gap is becoming an economic gap, as I’ve been saying. I have never believed a word when someone says AI will “democratize X.”

There is no democratizing when a new technology is born. It only deepens the existing economic unfairness.

What’s worse is that this shift is invisible to the people on the wrong side of it.

7. One State Just Made It a Felony, Unanimously.

Tennessee passed the Curbing Harmful AI Technology (CHAT) Act (House 90-0, Senate 31-0), creating criminal liability for chatbot operators whose products lead to self-harm or suicide.

Now we’re talking. Do you also believe that AI companies should share the responsibility when the evidence is clear that the AI chatbot was the last straw?

8. Agents Fail in Science

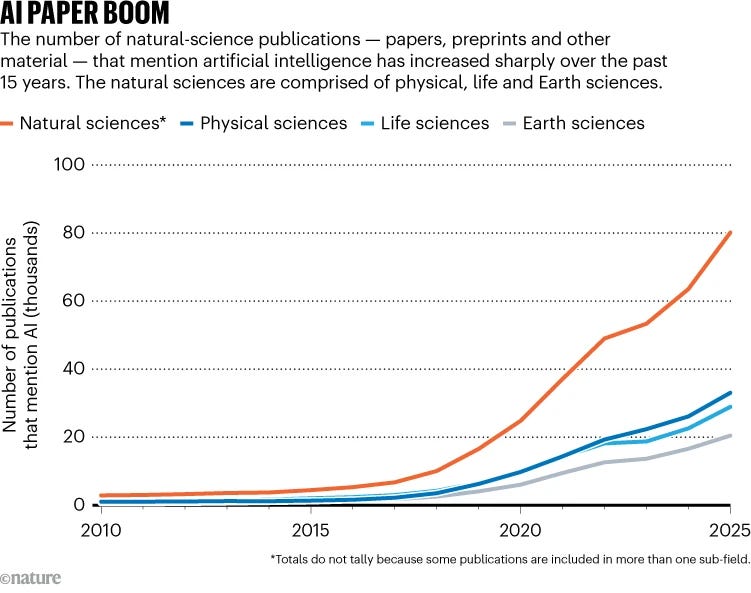

The Stanford AI Index 2026 found that the best AI agents score roughly half as well as human PhD specialists on complex, multi-step scientific workflows — yet the number of natural science publications mentioning AI grew nearly 30-fold between 2010 and 2025.

→ Nature

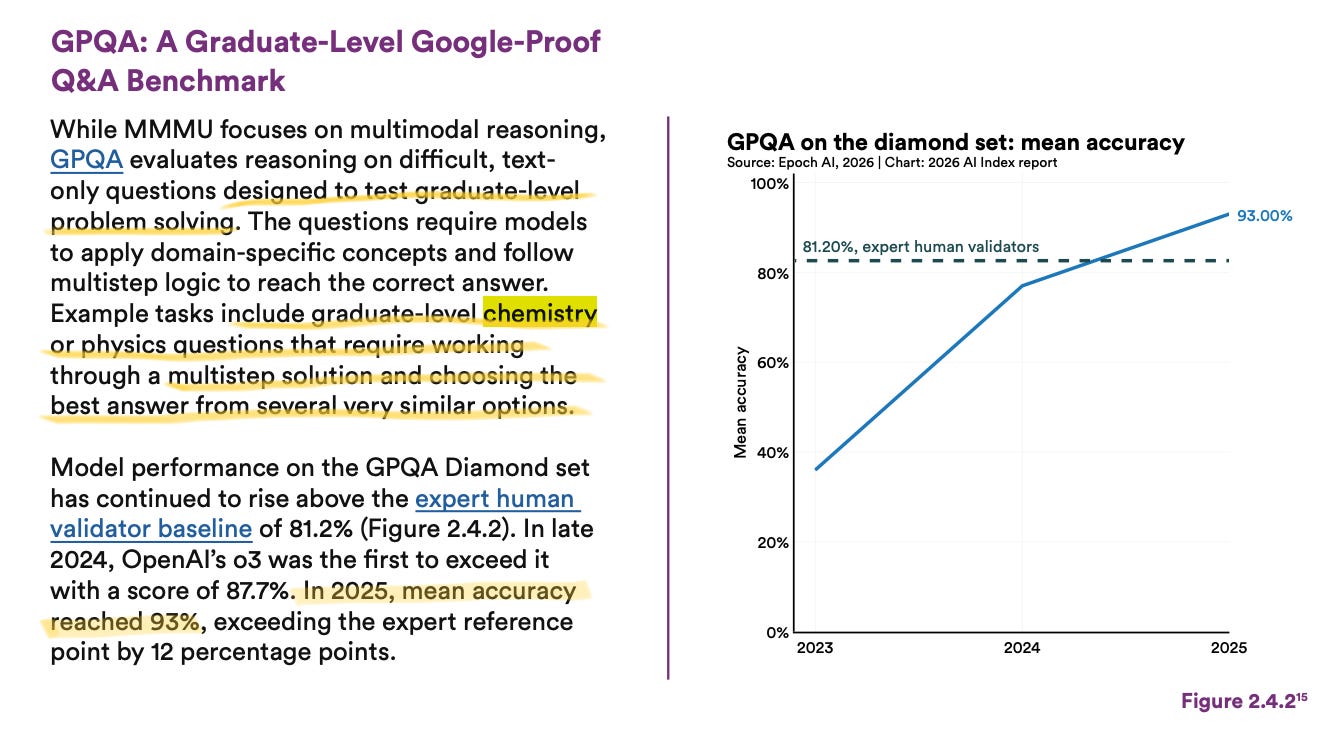

Some AI models perform very well on their benchmarks.

For example,

So it really depends on which benchmark you use.

But as a rule of thumb: put an AI agent in a real-world environment, test it on general day-to-day tasks, and it typically falls apart.

I read through all 400 pages of the 2026 Stanford AI Index. Read my analysis.