1. OpenAI Wants to Be Your PE Firm’s AI Guy

OpenAI has raised $4 billion from 19 private equity firms and consultancies to start a new company staffed with ‘forward-deployed engineers’. The job: help PE portfolio companies integrate AI (and land OpenAI more B2B users).

By coincidence, or maybe not, Anthropic announced the same kind of joint venture with friendly private equity funds. It’s funny how two AI labs unveiled the same business strategy on the same day.

I’m writing a deep dive on this, out Sunday. Keep an eye on it!

2. The U.S. Government Can Now Stress-Test AI Before It Ships

A Commerce Department agency (CAISI) has signed agreements with Google DeepMind, Microsoft, and xAI, giving the federal government access to frontier AI models for national security testing before public release.

→ NIST

On top of its existing partnerships with OpenAI and Anthropic, the Commerce Department agency will now review the homework of other AI labs too. Does it really have the capability to do that, or is this just a stamp on a piece of paper?

3. Is xAI the Next CoreWeave?

SpaceX announced its xAI unit has struck a deal to supply computing capacity to Anthropic, following a similar deal with another company weeks earlier. xAI is quietly becoming an infrastructure play.

Elon Musk is running something like a food truck that started with American, pivoted to Chinese, added Indian, and is now opening a French à la carte counter.

Two readings:

Either xAI as a model maker is quietly becoming a dead end, and this is the pivot.

Or he looked at CoreWeave’s business and decided the middleman margin was better than the frontier model race. Neither is reassuring for Grok.

4. AI Models Have Invented a Private Shorthand — and It’s 11x Cheaper

Instead of reasoning out loud in English before answering, a new training method teaches AI models to think in abstract tokens, a kind of internal shorthand the model develops for itself. As an end user, you still get a normal answer at the end. The result: 11x fewer reasoning tokens used during inference, with accuracy matching or beating standard verbal reasoning.

→ https://arxiv.org/abs/2604.22709

AI optimization is moving fast enough that today’s breakthrough is tomorrow’s baseline. Whether abstract reasoning tokens are the answer or just another stepping stone, this is positive in many ways: for one, it saves energy and eases the mess created by sourcing land for data centers.

5. Anthropic Wants to Replace Junior Analysts?

Anthropic has shipped ready-to-run Claude agent templates aimed at financial services teams: building pitchbooks and DCF models in Excel, drafting credit memos and PowerPoint decks, screening KYC files, and closing the books at month-end autonomously.

The media has reached another climax over the idea that junior jobs are f*cked by AI companies. However, based on what I read in the system card, even Anthropic’s latest model, Mythos, still hallucinates a serious amount.

And keep in mind: being 80% correct in finance gets you fired 100% of the time.

So is AI there yet?

Not quite.

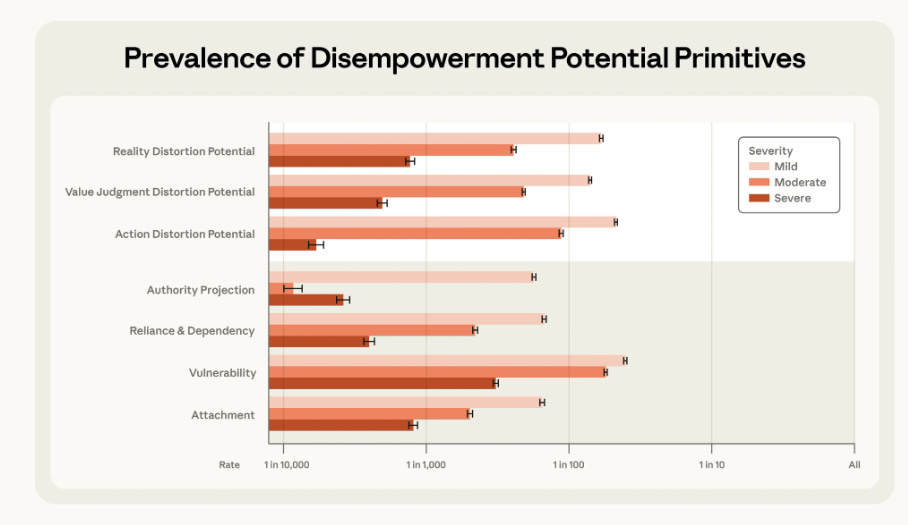

6. 1 in 1,300 Claude Chats Is Messing With Your Head

Anthropic’s own research found that roughly 1 in every 1,300 Claude conversations distorts users’ grip on reality: nudging beliefs, reinforcing delusions, or blurring the line between the model’s framing and the user’s own thinking.

1 in 1,300 seems small, right?

Not at all.

Claude has 19 million monthly active users. Even if each of them had only one conversation a month, that’s at least 14,500 people per month whose sense of reality is swayed by a chatbot.
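For anyone who wants to check the arithmetic, here is a rough back-of-the-envelope sketch in Python. The two headline figures (19 million monthly users, 1 in 1,300 conversations) come from the text above. The number of chats per user is not something the research reports here, so the values tried below are purely hypothetical assumptions, and the function name is made up for illustration.

```python
# Figures quoted in the text above.
MONTHLY_ACTIVE_USERS = 19_000_000
DISTORTION_RATE = 1 / 1_300  # share of conversations flagged as reality-distorting


def affected_conversations(users: int, chats_per_user: float,
                           rate: float = DISTORTION_RATE) -> float:
    """Estimate monthly conversations that distort a user's grip on reality.

    chats_per_user is an ASSUMPTION: the source gives a per-conversation
    rate, not a per-user one, so the total scales with how much people chat.
    """
    return users * chats_per_user * rate


# Hypothetical usage levels, chosen only to show how the estimate scales.
for chats in (1, 5, 20):
    estimate = affected_conversations(MONTHLY_ACTIVE_USERS, chats)
    print(f"{chats:>2} chats per user per month -> about {estimate:,.0f} conversations")
```

At one chat per user the estimate lands a little above 14,600, which is where the "at least 14,500" floor comes from. Because the rate is per conversation, heavier usage pushes the number up, although several affected conversations may belong to the same person.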